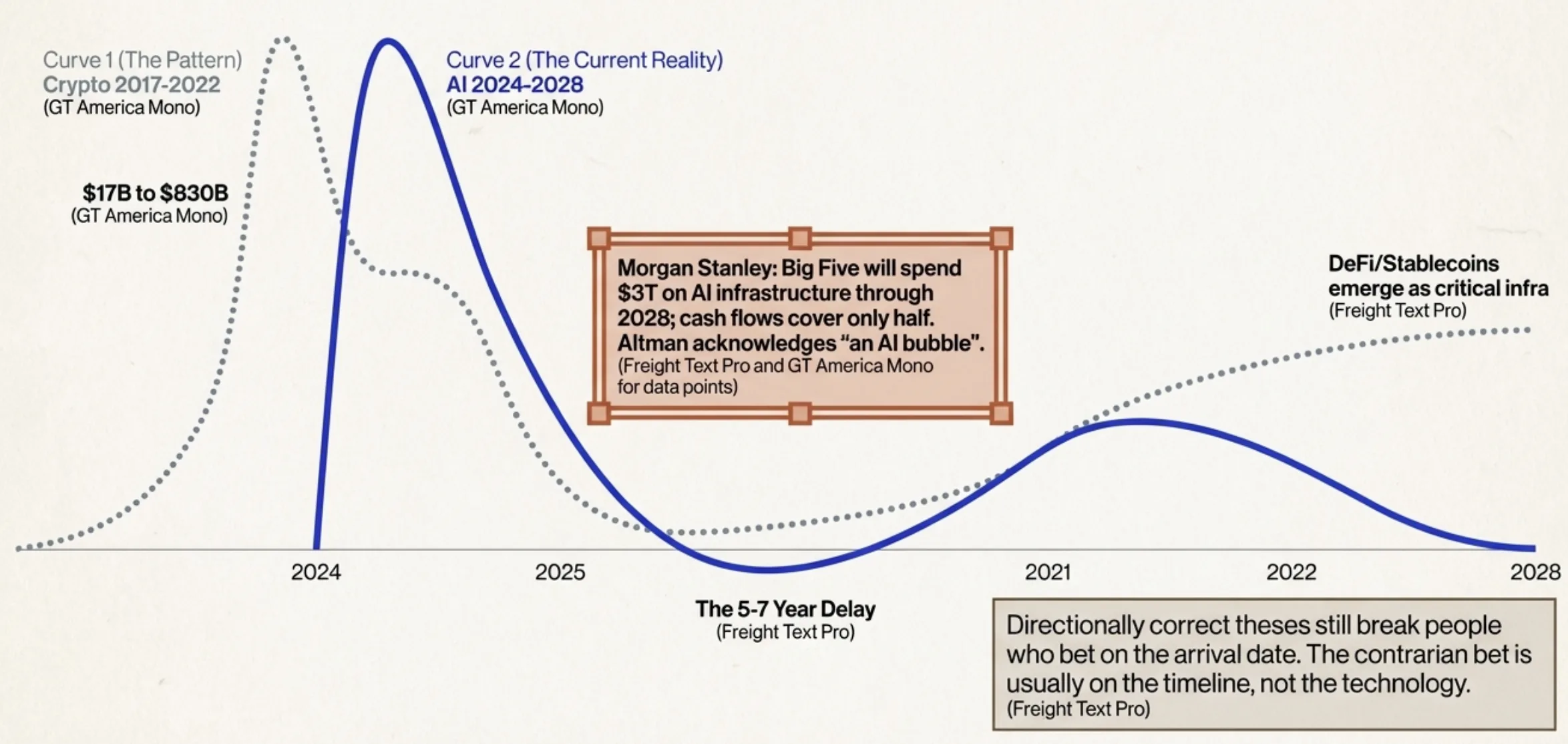

TL;DR: Leopold Aschenbrenner's Situational Awareness got the direction of AI progress right and the timeline wrong. The crypto parallel is instructive: directionally correct theses still break people who bet on the arrival date. Build for a world where AI capabilities keep compounding but economic integration moves at institutional speed.

Leopold Aschenbrenner published Situational Awareness: The Decade Ahead in June 2024, arguing that AGI would arrive by 2027, trigger an intelligence explosion and demand a Manhattan Project-scale government response. I've spent thirteen years building through hype cycles: writing production code against Bitcoin's RPC interface in 2012, watching a trillion dollars of speculative value materialize around blockchain, collapse, reconstitute. That pattern recognition is why Aschenbrenner's essay hit different for me. He's not wrong about the trajectory. He's wrong about the physics of how trajectories interact with reality.

The direction was right

The honest assessment: Aschenbrenner got more right than wrong. His three-driver framework (compute scaling, algorithmic efficiency, unhobbling) played out almost exactly as described.

Compute buildout exceeded his projections. Stargate alone: $500 billion committed as of March 2026, with total global AI infrastructure north of $2 trillion planned or underway. The money showed up two to three years ahead of his most aggressive estimates. Algorithmic efficiency held at roughly 0.5 orders of magnitude (OOM) per year, but through mechanisms he didn't predict: test-time compute, chain-of-thought reasoning, mixture-of-experts. DeepSeek's V3 claimed GPT-4-level performance at one-tenth the training compute of Llama 3.1.
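To make the 0.5 OOM-per-year figure concrete, here is a minimal Python sketch of how orders of magnitude compound into plain multipliers. It assumes the rate stays constant over the whole horizon, which is a simplification of mine, and the function names are illustrative only.

```python
def oom_to_multiplier(ooms: float) -> float:
    """Convert orders of magnitude into a plain multiplier (1 OOM = 10x)."""
    return 10.0 ** ooms


def efficiency_gain(rate_oom_per_year: float, years: float) -> float:
    """Cumulative efficiency multiplier, assuming a constant yearly OOM rate."""
    return oom_to_multiplier(rate_oom_per_year * years)


RATE = 0.5  # OOM per year, the figure quoted in the text

for years in (1, 2, 4):
    print(f"{years} yr at {RATE} OOM/yr: {efficiency_gain(RATE, years):.1f}x")
```

At this rate, one year is a bit over 3x, two years is 10x, and four years is 100x. That is why a seemingly modest "half an order of magnitude" matters so much to the thesis.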

Unhobbling turned out to be the dominant driver. The most dramatic gains between June 2024 and March 2026 came not from bigger models but from post-training improvements: reinforcement learning, tool use, agentic scaffolding. Claude Opus 4.6 sustains autonomous work for over 14 hours. For about $20,000, sixteen copies of it wrote a working C compiler in Rust that can compile the Linux kernel. The chatbot-to-agent transition he described is actively happening.

Getting the direction right doesn't mean getting the arrival time right

I learned this in crypto. In 2013, we knew programmable money would reshape financial infrastructure. We were right. We were also eight years early on institutional adoption. The path from "this will be huge" to "this is actually deployed" was nothing like the straight line we imagined.

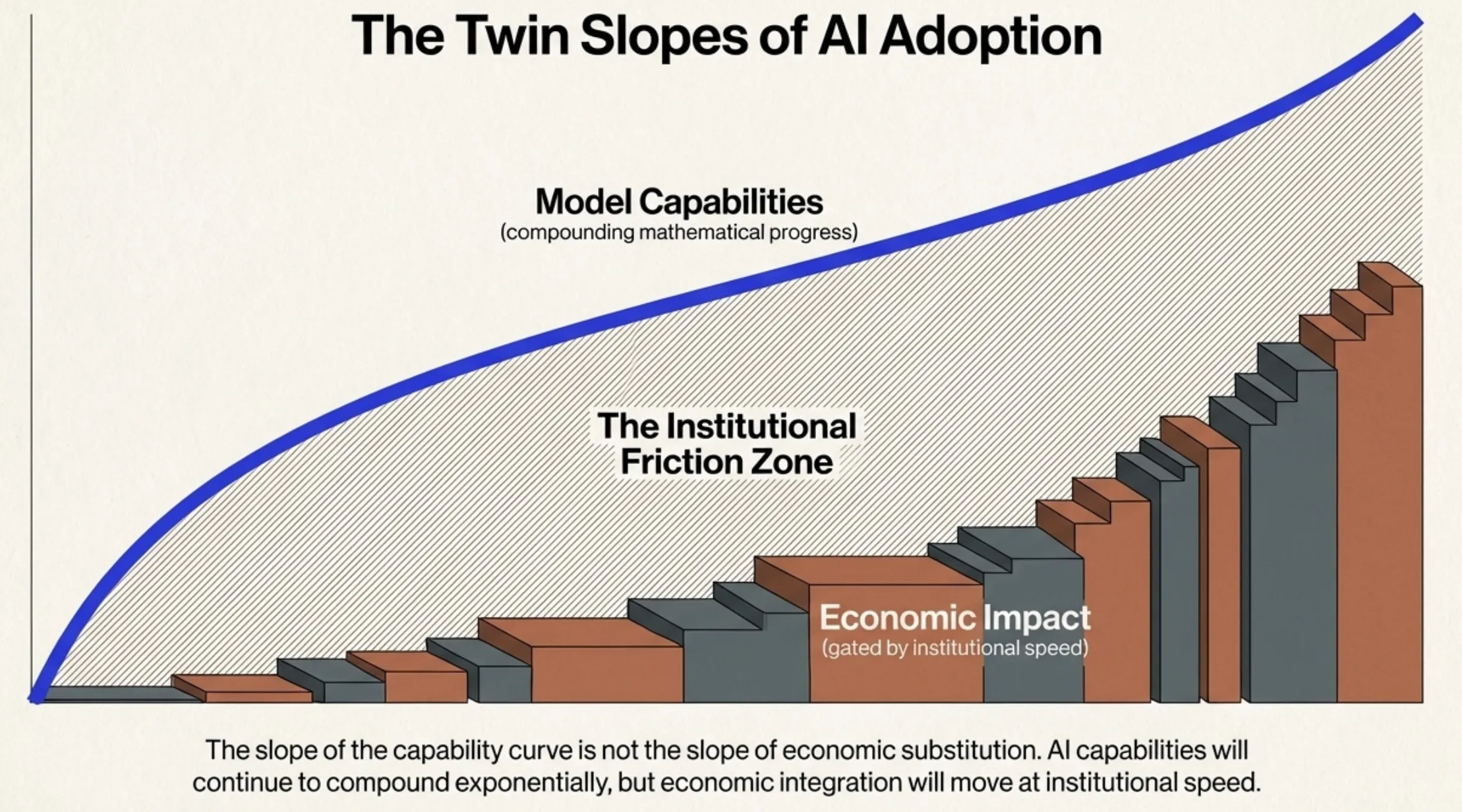

AGI by 2027 looks increasingly unlikely in the form Aschenbrenner described. His benchmark was a "drop-in remote worker" that joins a company, gets onboarded, works independently for weeks. A system with a 14-hour task horizon is not one that can replace a mid-level engineer for months. GPT-5 scores 74.9% on SWE-bench Verified. Impressive, but not a drop-in replacement for someone who understands organizational context and technical debt. The slope of the capability curve is not the slope of economic substitution. Aschenbrenner consistently conflated the two.

The intelligence explosion hasn't materialized. Models help build their successors (Anthropic used Claude Code to develop its latest systems) but this is tool-assisted research, not autonomous recursive improvement. "AI makes researchers 2x more productive" is not the same claim as "AI replaces the research loop entirely".

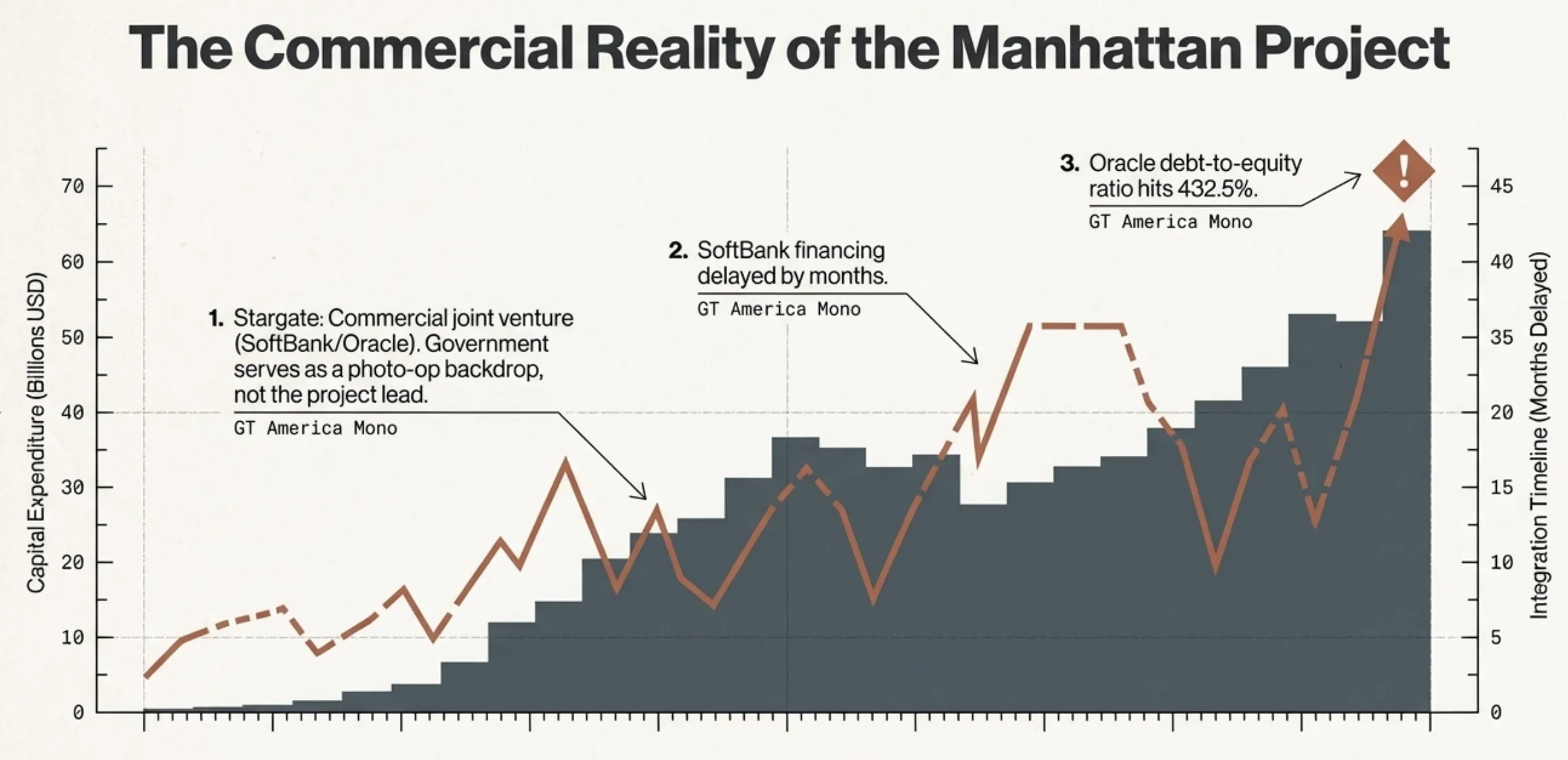

The Project didn't happen either. Instead of a government-led Manhattan Project, we got Stargate: a commercial joint venture funded by SoftBank and Oracle, with the government as photo-op backdrop. SoftBank's financing took months longer than planned. Oracle's debt-to-equity ratio hit 432.5%. This is a leveraged infrastructure bet with geopolitical window dressing.

DeepSeek broke the geopolitical model

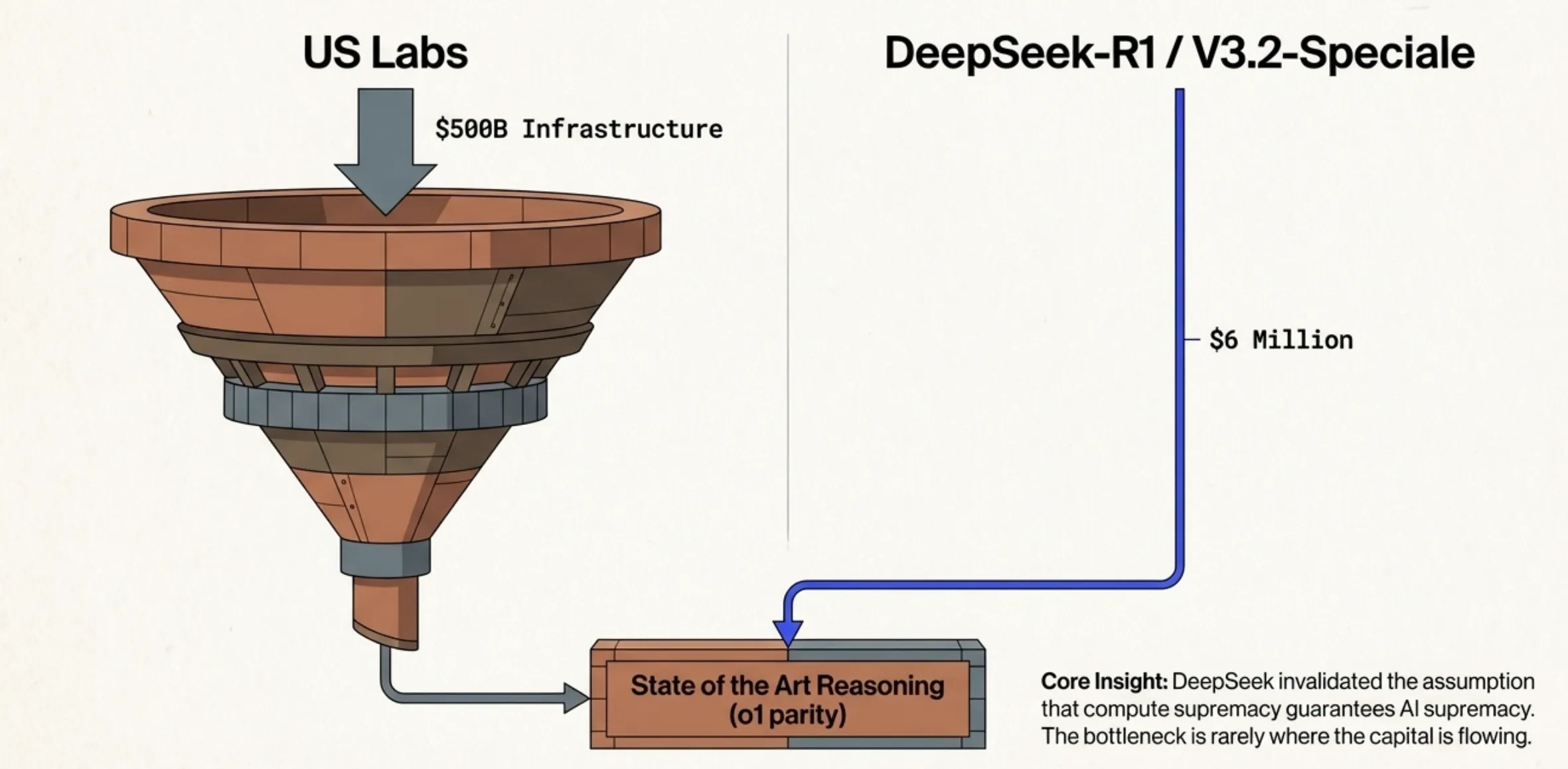

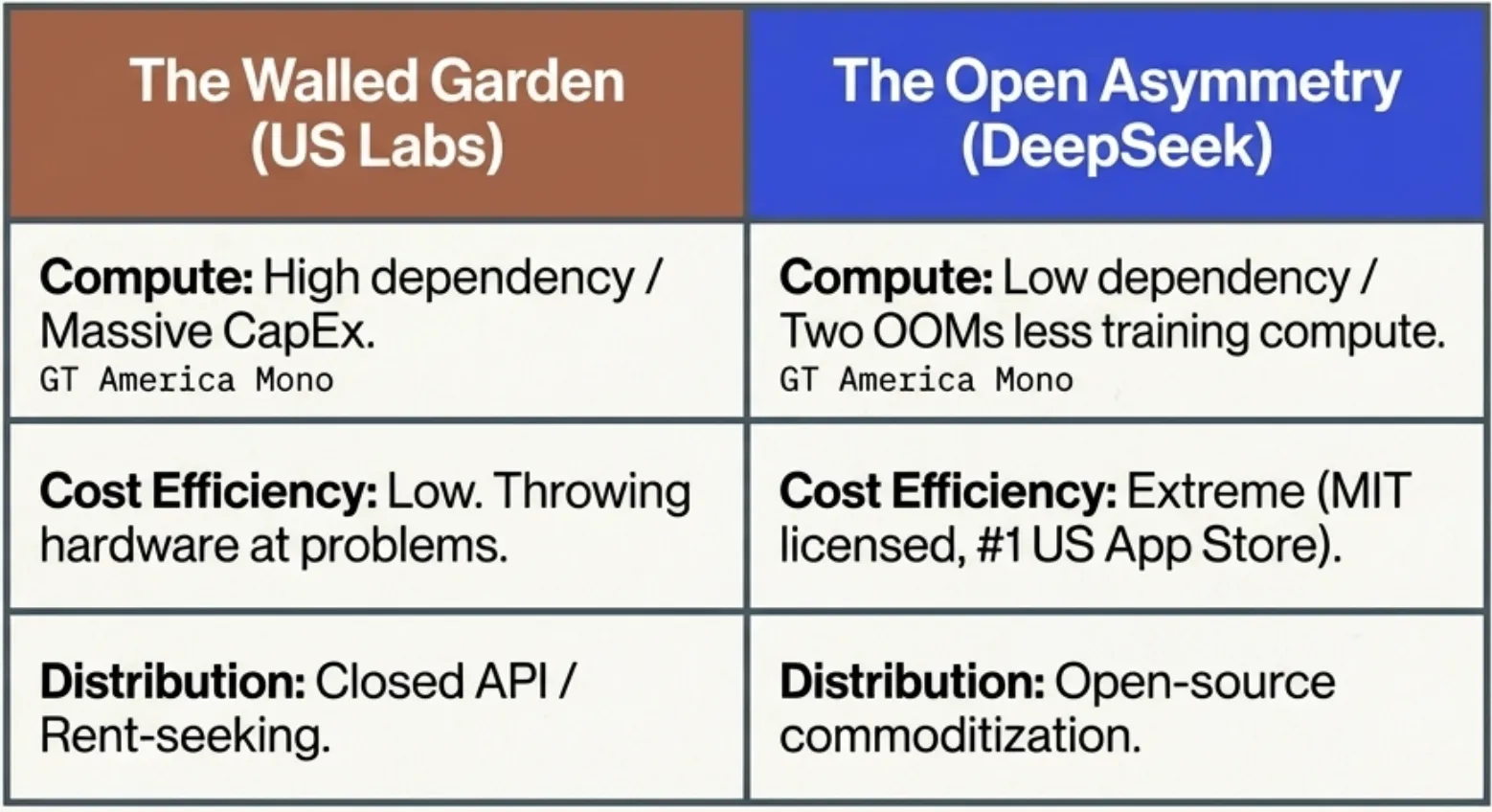

Aschenbrenner's framework rests on the assumption that compute supremacy translates directly to AI supremacy. DeepSeek invalidated this in January 2025.

DeepSeek-R1 matched OpenAI's o1 reasoning performance at a reported training cost of $6 million: roughly two orders of magnitude less than comparable US models. Released under an MIT license, it became the number-one free app on the US App Store. By December 2025, DeepSeek-V3.2-Speciale reportedly approached Gemini 3 Pro in complex reasoning. Aschenbrenner devoted an entire chapter to locking down algorithmic secrets, but the most consequential algorithmic innovation of the past two years came from outside the American labs he wanted to fortify.

The bottleneck is never where you expect it. Throwing hardware at a software problem usually just creates a more expensive version of the same problem.

The crypto parallel nobody's drawing

In 2017, crypto had its own Situational Awareness moment. Total market capitalization went from $17 billion to $830 billion in a single year. Smart people wrote serious pieces arguing decentralized finance would restructure the global financial system within five years. They were directionally right. DeFi did emerge. Stablecoins became critical infrastructure. The timeline was off by five to seven years. Most predictions about which architectures would win were wrong.

The AI investment is real and mostly rational, but the pace of spending outruns the pace at which value can be captured. Morgan Stanley estimates the Big Five will spend roughly $3 trillion on AI infrastructure through 2028, with cash flows covering only half. Even Sam Altman acknowledged "an AI bubble". When the builders start acknowledging the froth, pay attention.

Capabilities keep improving. Economic integration stays slow. Lightning Network was technically functional in 2018 but didn't achieve meaningful adoption for years. AI coding tools work today. But organizational transformation (management restructuring, workflow redesign, liability frameworks) moves at institutional speed, not model-release speed. The contrarian bet is usually on the timeline, not the thesis. Every crypto winter produced people who concluded the thesis was wrong because the timeline was wrong. They sold at the bottom.

The crypto parallel is imperfect, and that matters

The comparison breaks in a specific way I should be honest about. Crypto was a financial instrument that needed regulatory and institutional adoption to reach product-market fit. AI is a productivity tool with immediate individual utility. The adoption curve for something that makes your existing job easier looks nothing like the curve for something that demands restructured financial relationships. AI tools are already deployed at scale in ways crypto took a decade to achieve. My timeline skepticism could be the kind of pattern-matching that over-indexes on the last war.

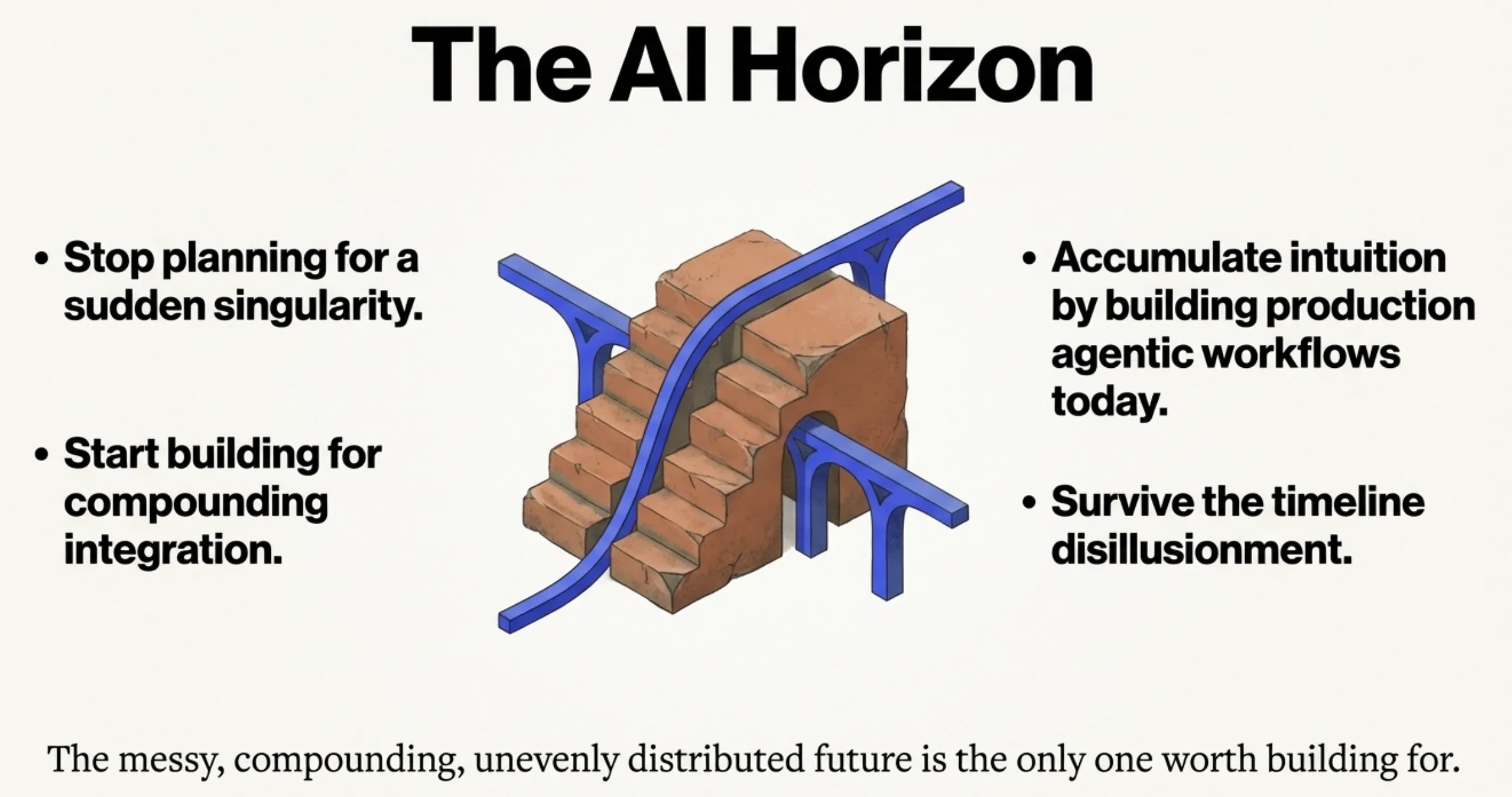

There's also a lazier version of this argument that just says "the truth is in the middle". That's not what I'm claiming. Aschenbrenner's core point, that we are building something genuinely transformative with inadequate institutional response, is not a claim I'm moderating. I'm arguing the mechanism looks more like electrification (decades of compounding integration) than nuclear fission (a discrete explosion). Being wrong about the shape of change can be just as costly as being wrong about the direction. If you plan for a sudden discontinuity and instead get a long ramp, your resource allocation will be wrong in ways that compound every quarter.
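A toy model can show why the shape of change matters for planning. The Python sketch below compares a plan that assumes a sudden step change with an outcome that follows a gradual logistic ramp, and sums the gap between the two quarter by quarter. Every parameter (arrival quarter, midpoint, steepness, horizon) is a hypothetical number chosen for illustration, not an estimate from the essay or from data.

```python
import math


def step_plan(quarter: int, arrival_quarter: int) -> float:
    """Planned adoption level: nothing, then everything at once."""
    return 1.0 if quarter >= arrival_quarter else 0.0


def logistic_ramp(quarter: int, midpoint: float, steepness: float) -> float:
    """Actual adoption level under a gradual S-curve (hypothetical shape)."""
    return 1.0 / (1.0 + math.exp(-steepness * (quarter - midpoint)))


def cumulative_gap(quarters: int, arrival_quarter: int,
                   midpoint: float, steepness: float) -> list[float]:
    """Running total of |plan - actual|, one entry per quarter."""
    total = 0.0
    running = []
    for q in range(quarters):
        total += abs(step_plan(q, arrival_quarter)
                     - logistic_ramp(q, midpoint, steepness))
        running.append(total)
    return running


# Hypothetical scenario: plan assumes arrival in quarter 4,
# while the ramp only reaches its midpoint in quarter 12.
gaps = cumulative_gap(quarters=20, arrival_quarter=4, midpoint=12, steepness=0.4)
for q in (0, 4, 8, 12, 16, 19):
    print(f"quarter {q:2d}: cumulative planning gap {gaps[q]:.2f}")
```

The running total never falls: each quarter spent planning for the wrong shape adds to the misallocation, even when the long-run destination is the same.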

Build for the messy, gradual world

AI coding tools already change the team-size math, but through coordination costs, not headcount elimination. A strong engineer with good tooling produces two to three times the output. Smaller teams can take on larger scope. The number of teams you need might not shrink.
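One way to picture the coordination-cost argument is the familiar pairwise-channels heuristic: a team of n people has n(n-1)/2 possible communication paths. The Python sketch below applies it under two assumptions of mine, that coordination cost tracks pairwise channels and that tooling doubles per-engineer output (the low end of the range above). Neither is a measured result.

```python
def pairwise_channels(team_size: int) -> int:
    """Number of distinct person-to-person communication paths in a team."""
    return team_size * (team_size - 1) // 2


def team_output(team_size: int, output_per_engineer: float) -> float:
    """Naive total output, ignoring coordination losses (an assumption)."""
    return team_size * output_per_engineer


# Hypothetical comparison: 8 engineers without tooling vs. 4 with a 2x boost.
for size, multiplier in ((8, 1.0), (4, 2.0)):
    print(
        f"team of {size}: output {team_output(size, multiplier):.0f} units, "
        f"{pairwise_channels(size)} channels"
    )
```

Under these assumptions the two teams produce the same nominal output, but the team of eight carries 28 channels and the team of four carries 6. The saving shows up in coordination overhead rather than in eliminated roles, which is why scope per team can grow while the number of teams holds steady.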

The agentic transition is the real inflection. Model Context Protocol, Agent2Agent, the ecosystem of agentic frameworks: this is the plumbing that determines how software gets built next. If you're not building production agentic workflows now, you're racking up intuition debt that compounds.

Aschenbrenner published his essay and then started a hedge fund. By Q4 2025, he'd exited Nvidia and was buying bitcoin mining stocks and power generation companies. His revealed preferences tell you more than his predictions. He bet on infrastructure and energy, not on AGI arriving precisely in 2027. The messy, compounding, unevenly distributed future is the one worth building for. Not the one in the essay.