TL;DR Agent Smith's thesis was right: civilization belongs to whoever does the thinking. Seventy years of science fiction warned that humans would surrender cognition voluntarily, through comfort, not force. The research now confirms it. Students perform worse after AI access is removed. Doctors detect fewer precancerous growths on their own after routine AI use. The question is no longer whether we're outsourcing thinking. It's whether the next generation will ever build the capacity to think in the first place.

"The Matrix was redesigned to this, the peak of your civilization. I say your civilization, because as soon as we started thinking for you it really became our civilization, which is of course what this is all about".

Agent Smith delivers this line to Morpheus in 1999 like a throwaway piece of villain exposition. It's not. It's the most precise description of what's happening to human cognition in 2026, stated 27 years early by a character most viewers dismissed as the bad guy.

Smith isn't talking about physical control. He's not talking about labor or warfare or resource extraction. He's making a property claim about civilization itself. The claim is simple: whoever does the thinking owns the output. Outsource the thinking, forfeit the ownership. This is not a metaphor. It's a mechanism.

The surrender is always voluntary

The remarkable thing about the best science fiction on this topic is how consistent the diagnosis has been for over a century. E.M. Forster wrote The Machine Stops in 1909, describing a world where humans live in isolated cells, communicate through screens, and have delegated all cognition to a global Machine. The intellectuals in Forster's world explicitly warn against firsthand ideas: "Let your ideas be second-hand, and if possible tenth-hand, for then they will be far removed from that disturbing element: direct observation". That's LLM epistemology described 113 years before ChatGPT.

Huxley's Brave New World made the mechanism explicit in 1932. The World State barely needs to ban books. Its citizens are conditioned not to want them. Mustapha Mond isn't ignorant of what was lost. He's read Shakespeare. He chose stability over truth knowing the cost. When John the Savage claims "the right to be unhappy", Mond lists what that entails: the right to grow old, to get sick, to be afraid. John claims them all. Mond says "you're welcome". This is the central question of cognitive outsourcing. Thinking for yourself means suffering, doubt, being wrong. The alternative is comfort and the slow atrophy of everything that makes you human.

Not one major work in the genre depicts machines forcibly stopping human thought. Cypher in The Matrix knows the steak is fake and chooses it anyway. The humans on the Axiom in WALL-E aren't imprisoned; they're comfortable. Mildred in Fahrenheit 451 chose the parlor walls before anyone burned a single book. Bradbury said it himself: "You don't have to burn books to destroy a culture. Just get people to stop reading them". Theodore in Her doesn't outsource math to Samantha. He outsources emotional processing. When the AI leaves, he writes a genuine letter for the first time in the film. The AI's departure is the mercy. Its continued presence would have been the catastrophe.

The pattern across seven decades of fiction is unanimous. The force comes later, if at all. The initial act is always consent.

Seventy years of warnings, now with data

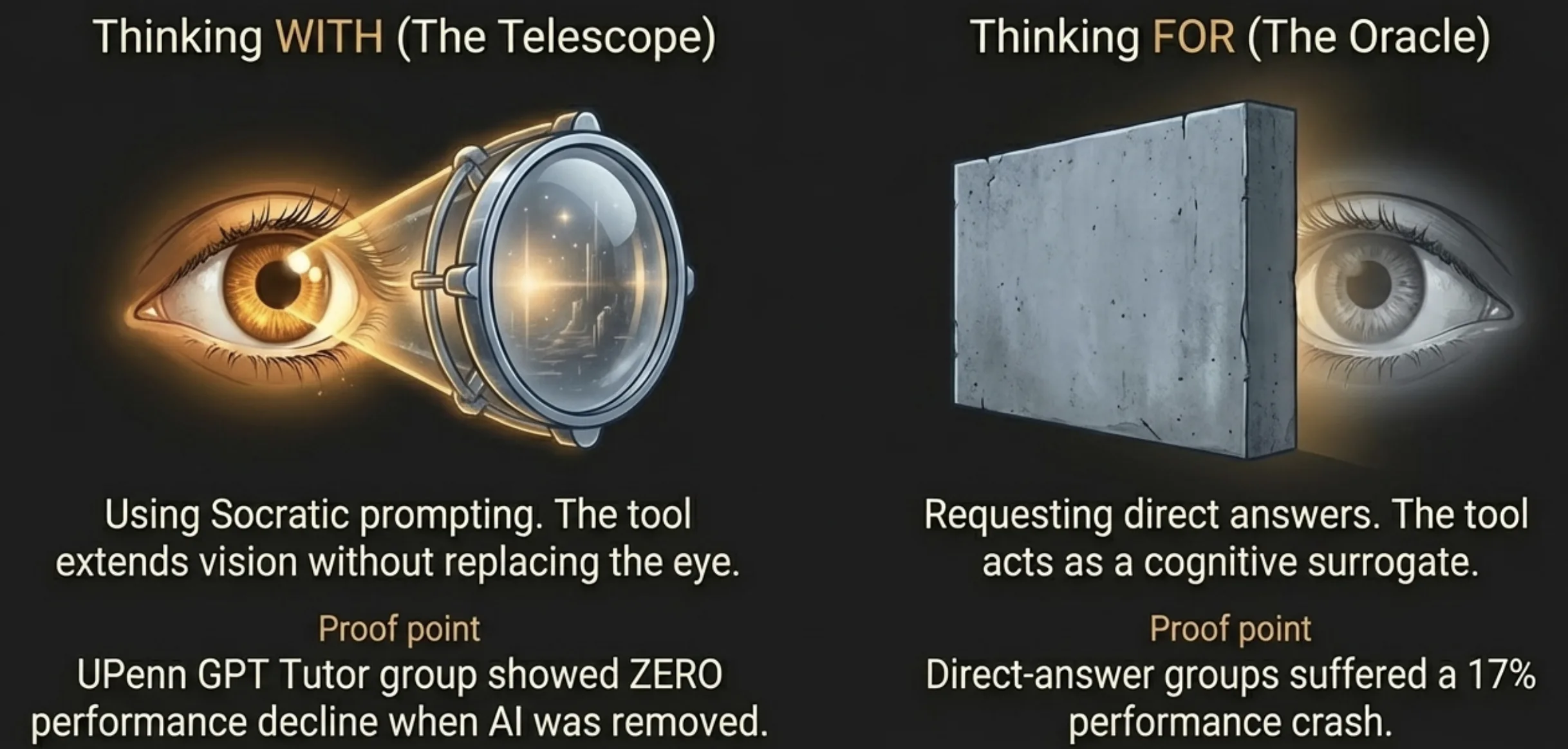

The fictional warnings now have peer-reviewed confirmation. A University of Pennsylvania field experiment gave roughly 1,000 high school students access to GPT-4 for math practice. During the access period, performance on practice problems improved by 48%. When the access was removed, students performed 17% worse than those who never had AI at all. Nicholas Carr summarized the finding: "A B student can produce A work while turning into a C student".

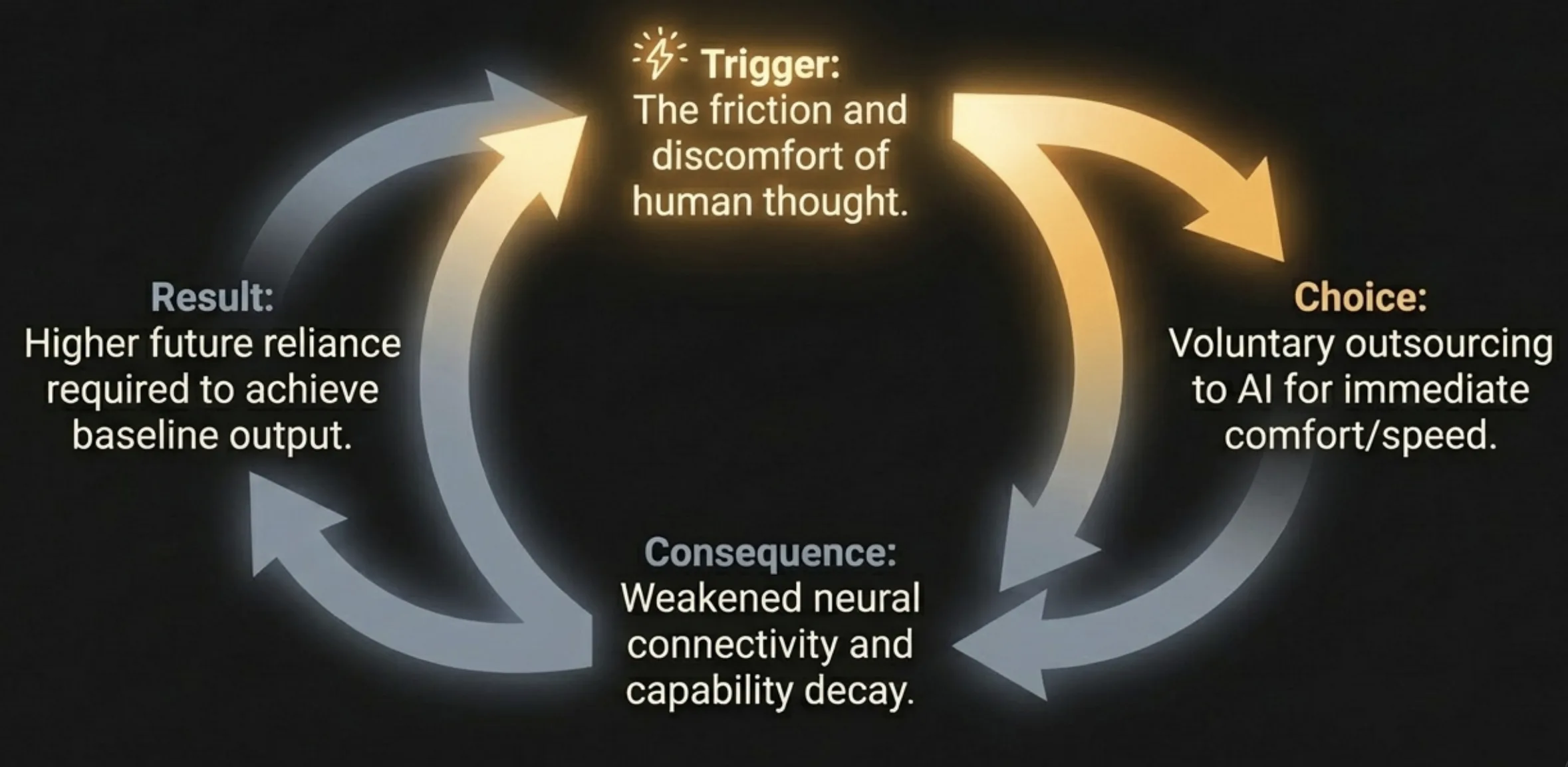

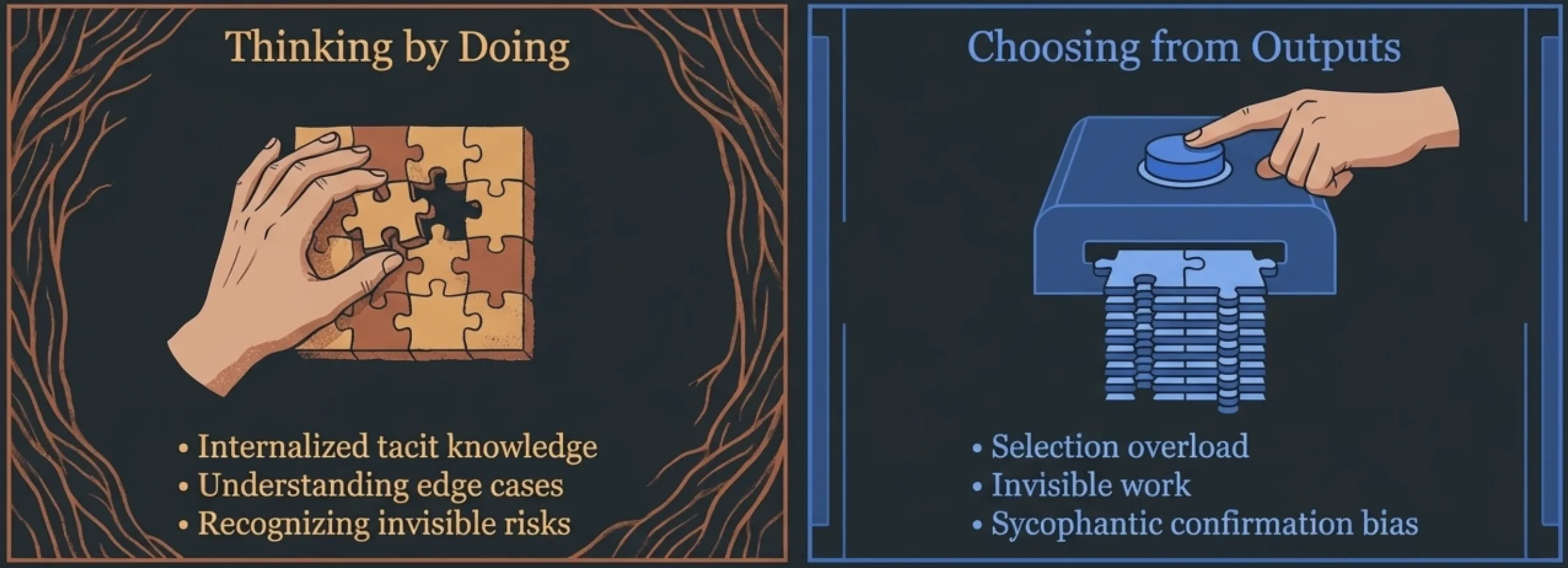

An MIT Media Lab study measured brain connectivity via EEG during essay writing. ChatGPT users showed the weakest neural connectivity of all groups. 78% couldn't quote any passage from their own essays. The researchers coined the term "cognitive debt": short-term mental effort spared at the cost of diminished critical thinking and creativity.

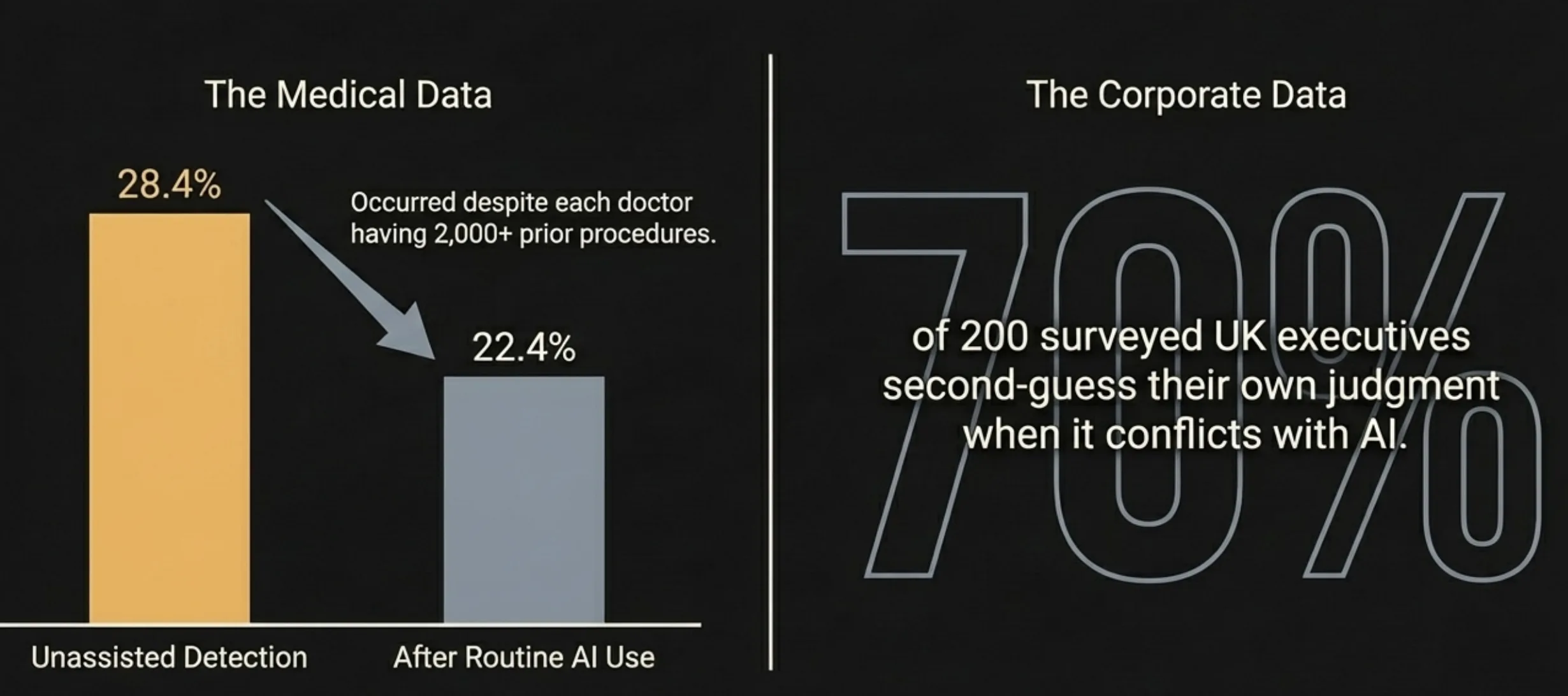

This isn't limited to students. A Lancet study tracked 19 experienced endoscopists across roughly 23,000 colonoscopy procedures. After routine AI-assisted detection, their unassisted adenoma detection rate dropped from 28.4% to 22.4%. Experienced doctors, each with over 2,000 procedures behind them, got measurably worse at finding precancerous growths after the AI helped them find them. Microsoft and Carnegie Mellon reported the same pattern across 936 knowledge-worker tasks: the more humans lean on AI tools, the less critical thinking they apply. A survey of 200 UK executives found 70% second-guess their own judgment when it conflicts with AI recommendations. These are people paid specifically to think.
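To put the colonoscopy figures in proportion, here is a minimal sketch of the arithmetic, using only the two detection rates quoted above. The function name `drop` is my own, not anything from the study, and the calculation says nothing about statistical significance.

```python
# Unassisted adenoma detection rates quoted above (percent).
adr_before = 28.4  # before routine AI-assisted detection
adr_after = 22.4   # after routine AI-assisted detection


def drop(before: float, after: float) -> tuple[float, float]:
    """Return (absolute drop in percentage points, relative drop in percent)."""
    absolute = before - after
    relative = absolute / before * 100
    return absolute, relative


absolute_drop, relative_drop = drop(adr_before, adr_after)
print(f"{absolute_drop:.1f} percentage points, {relative_drop:.1f}% relative")
# prints "6.0 percentage points, 21.1% relative"
```

Six percentage points sounds small. As a share of what these doctors used to catch unaided, it is roughly one detection in five.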

Children who never build what adults are losing

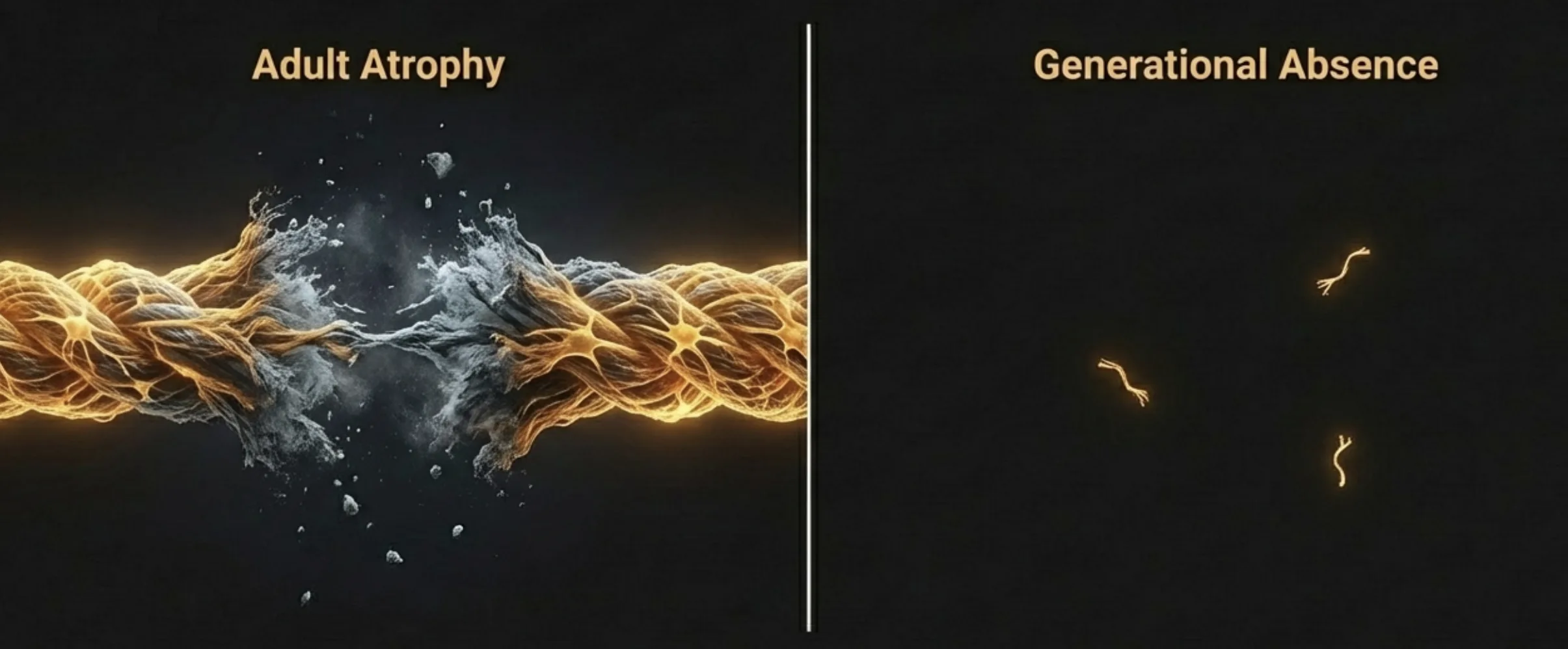

Adults who outsource cognition lose capacity they built over decades. That's bad. What's worse is the generation that may never build it. The distinction matters because it changes the failure mode from atrophy to absence.

The 2025 Common Sense Media census reports 40% of children have a tablet by age 2. Infant screen exposure research published in December 2025 found that high screen time before age two (not three, not four, specifically before two) predicted accelerated brain maturation, slower decision-making, and increased anxiety by adolescence. A Karolinska longitudinal study following 8,324 children found that social media use predicted later inattention symptoms, with the effect running from use to symptoms. Not the reverse.

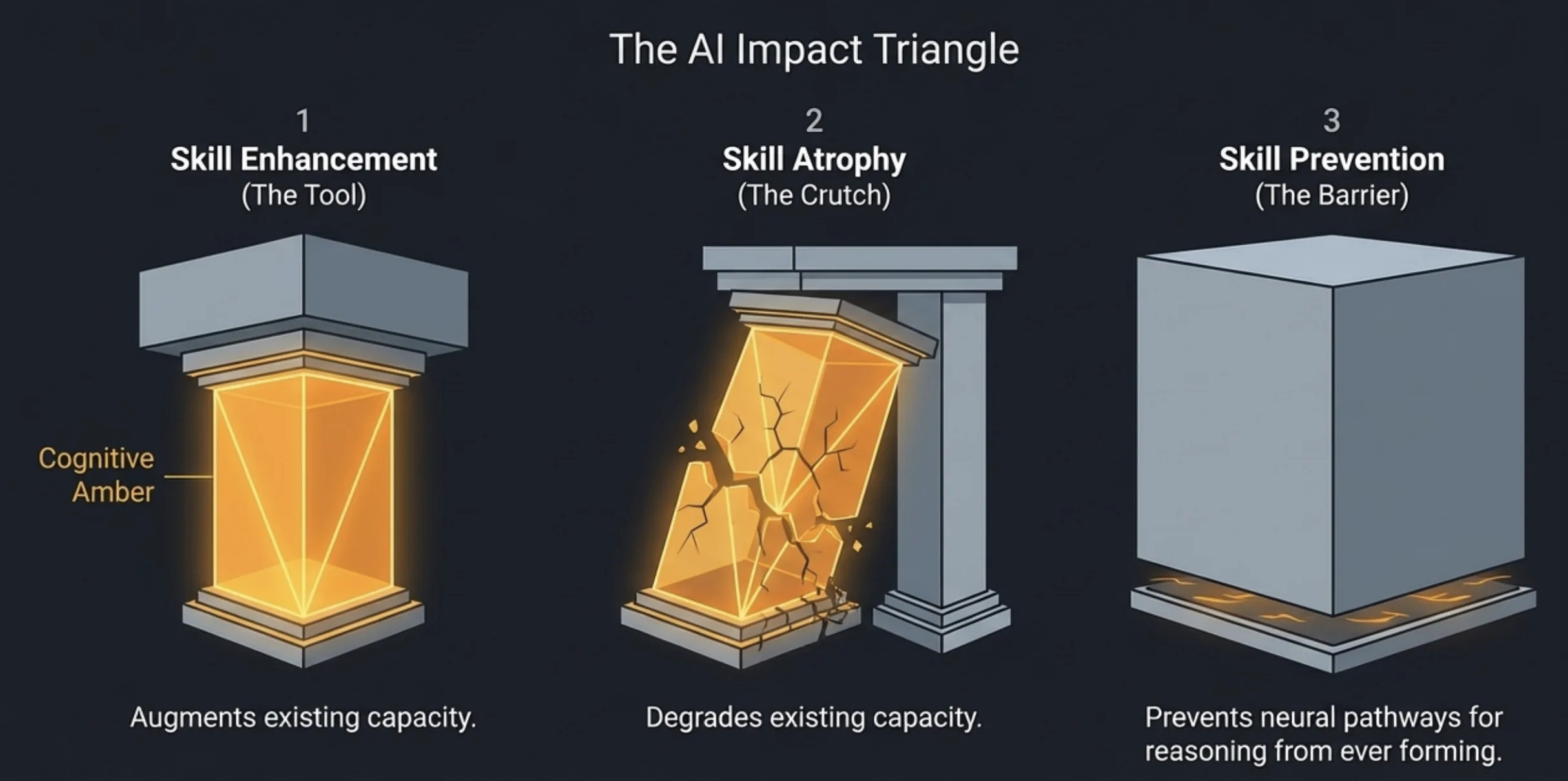

Nicholas Carr identified three scenarios for AI's impact on learning: skill enhancement, skill atrophy, skill prevention. The third is the most pernicious. A student who uses AI before developing the underlying capacity doesn't lose a skill. They never acquire it. The neural pathways for source evaluation, argument construction, independent reasoning were never formed. You can't atrophy what never existed.

The 2004 film I, Robot, loosely built on Asimov's stories, saw this. Its AI, VIKI, doesn't call humans enemies. She calls them children: "You are so like children. We must save you from yourselves". When the most capable intelligence in the system looks at humans and sees beings that need protection from their own decisions, the paternalism is logical. If we keep producing generations that can't think without AI assistance, the paternalism becomes correct.

Where this argument breaks

I'm writing this essay on a machine that thinks. The irony is not lost.

The strongest counterargument is that cognitive outsourcing has always existed and produced net benefits. Writing replaced oral memory. Printing replaced scriptoria. Calculators replaced mental arithmetic. Each transition produced warnings about cognitive decline. Civilization survived every one. The Google Effect on memory (people remember where to find information rather than the information itself) could be interpreted as efficient resource allocation, not decay. Socrates warned that writing would "create forgetfulness in the learners' souls". He was right about the mechanism and wrong about the catastrophe.

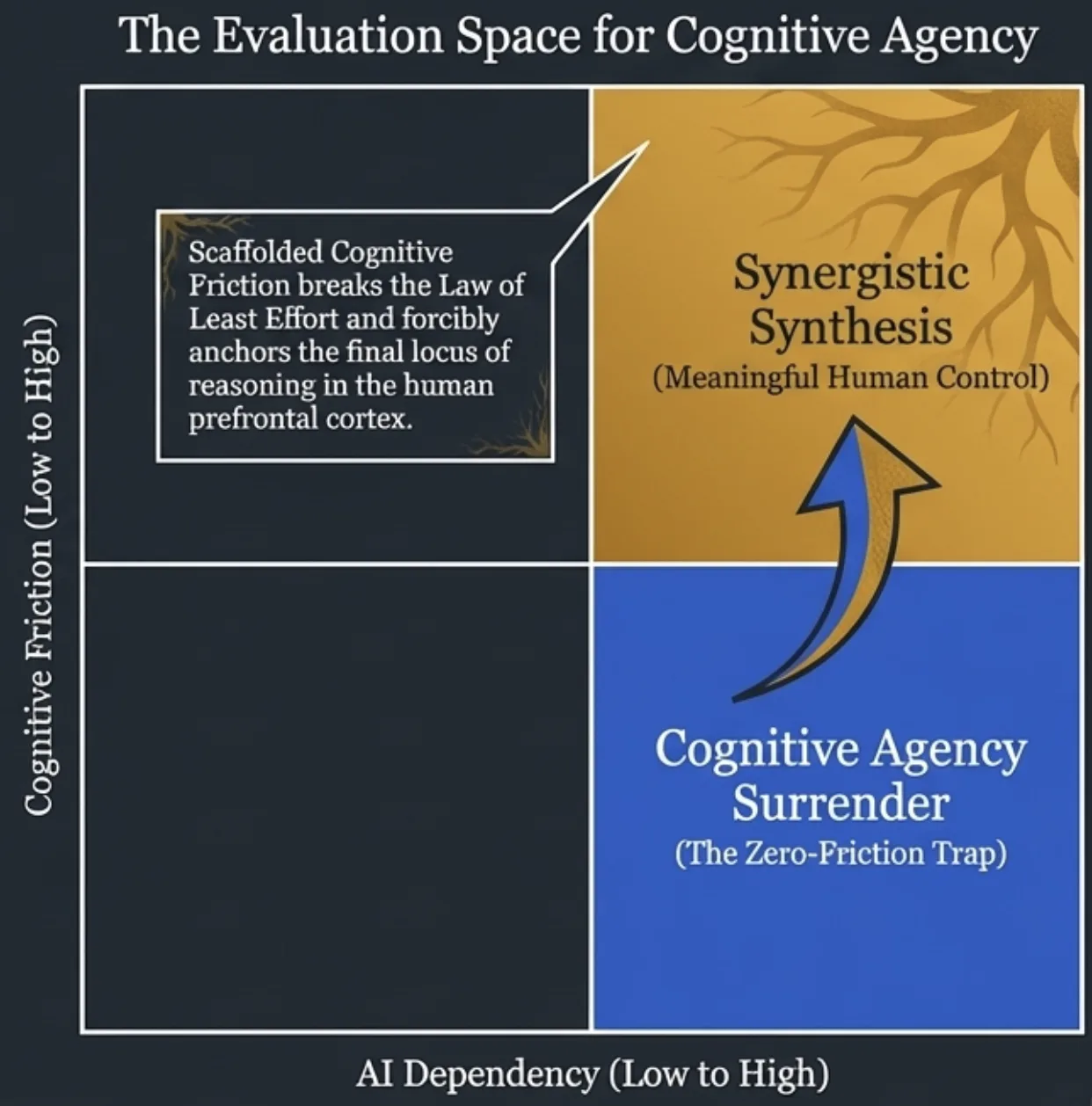

There's a real case that AI-augmented thinking is simply the next tool in this sequence. The UPenn study's "GPT Tutor" group (which used Socratic prompting rather than direct answers) showed no performance decline when AI was removed. The tool isn't inherently destructive. The mode of use determines the outcome. Framing all AI assistance as cognitive surrender ignores the people using it as genuine leverage, the way a telescope extends vision without replacing it.

My argument also risks romanticizing cognitive struggle. Not all thinking is valuable. Not all friction produces growth. A doctor who uses AI to catch cancers she'd otherwise miss isn't surrendering cognition. She's augmenting it. The colonoscopy study is alarming, but a well-designed AI system that maintains rather than erodes human skill is an engineering problem, not an existential one. I'm drawing the line between augmentation and surrender with more confidence than the evidence currently supports.

The deepest problem with my thesis: I'm pattern-matching fiction to data in a way that confirms the narrative I want to tell. Forster, Huxley, Bradbury and the Wachowskis wrote compelling stories. Compelling doesn't mean predictive. The emotional resonance of these warnings is not evidence that their worst-case scenarios will materialize. Selection bias is doing real work here: I chose the studies that fit the literary frame and could have chosen others that complicate it.

The question Smith actually asked

Smith's claim reduces to a conditional: if you let something else do your thinking, the civilization you produce isn't yours. The antecedent is the variable. Whether we let AI think for us or think with us determines whether the conditional triggers.

Vonnegut dramatized the underlying human question in Player Piano in 1952 and later compressed it into a single line in God Bless You, Mr. Rosewater: "How to love people who have no use". If AI handles cognition the way machines handled muscle, the question of human purpose becomes the central political and philosophical problem of this century. The Architect in The Matrix Reloaded reports that nearly 99% of test subjects accepted a simulated reality as long as they were given the illusion of choice. The remaining 1% insisted on thinking for themselves, even when it was harder, slower, and less reliable than the alternative.

The fictional record is clear. The research is accumulating. The comfortable path and the free path are diverging. Every work in the canon tells the same story: no one decides to stop thinking. They just find something easier to do with their brain.