TL;DR: A recent essay argues that AI can replace organizational hierarchy with a continuously updated model of the business and an intelligence layer that composes capabilities into solutions. Humans provide judgment at the edge. The vision is directionally right. But most companies will fail because they skip the prerequisite: building the proprietary understanding that makes a world model worth having. Here's what the framework gets right, what it takes to build, and where to start.

A recent essay proposes replacing organizational hierarchy with three AI-driven systems: a continuously updated model of the business, a customer model built from proprietary data, and an intelligence layer that composes atomic capabilities into solutions for specific customers at specific moments. Humans move to "the edge", where intelligence contacts reality and judgment is required. The vision is directionally right. Implementation is where it gets interesting.

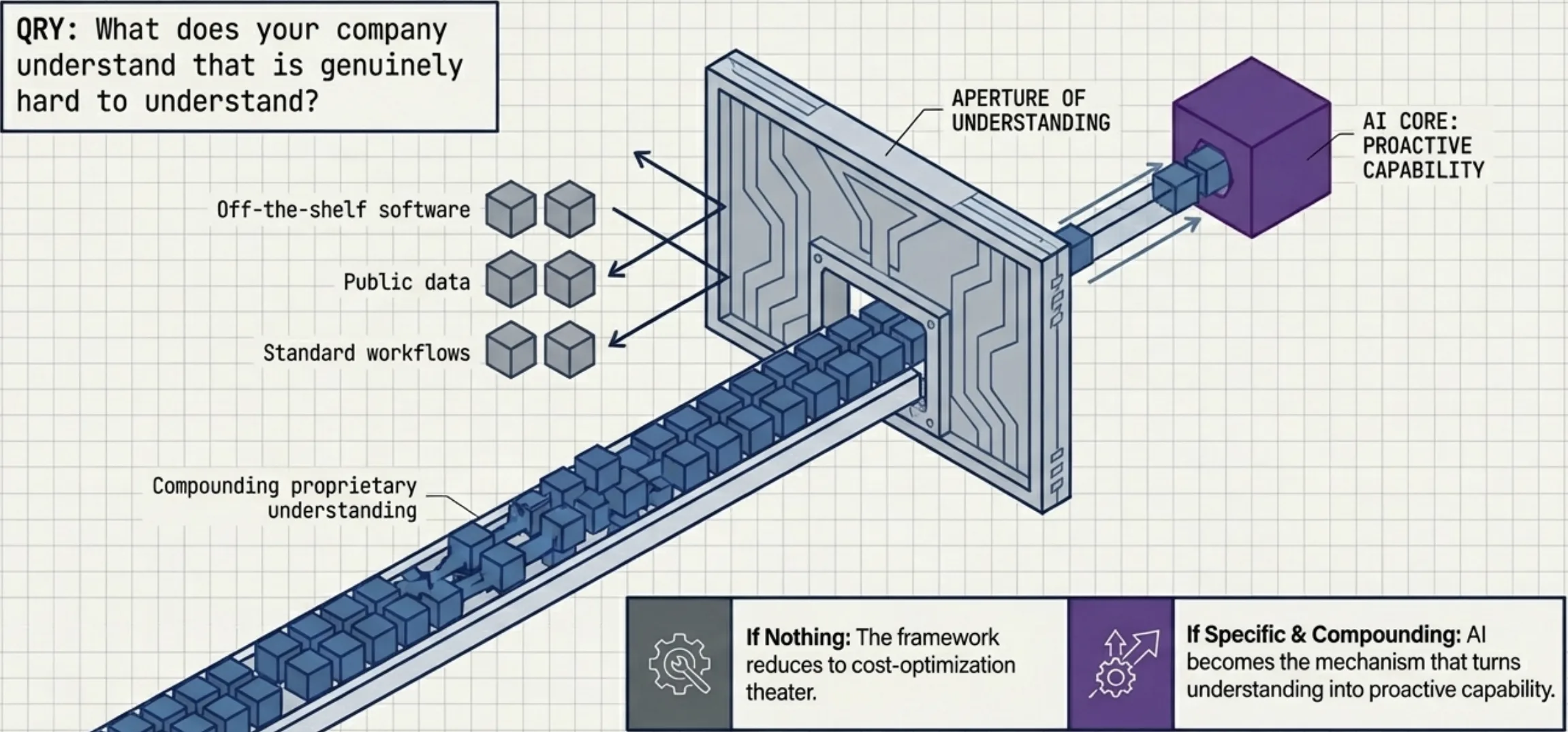

The essay's most important contribution isn't the architecture. It's a question: what does your company understand that is genuinely hard to understand? And is that understanding getting deeper every day? If the answer is nothing, AI becomes cost-optimization theater. If the answer is specific and compounding, AI becomes the mechanism that turns proprietary understanding into proactive capability. Everything else in the framework depends on the answer.

I wrote about the coordination math in The coordination tax. Small teams, big missions. This essay goes further. It proposes what replaces coordination after you remove the coordinators.

Building the world model is the prerequisite

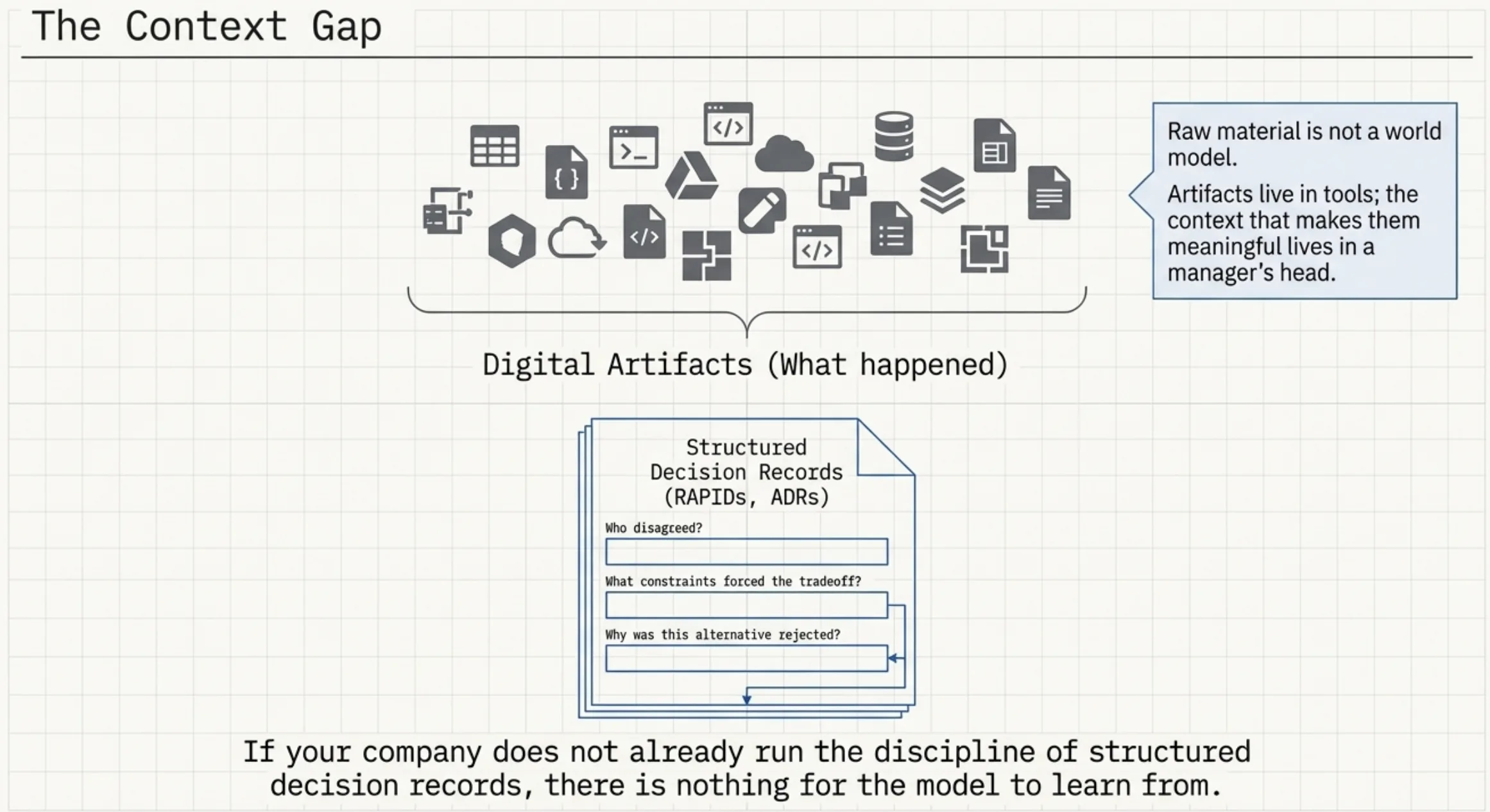

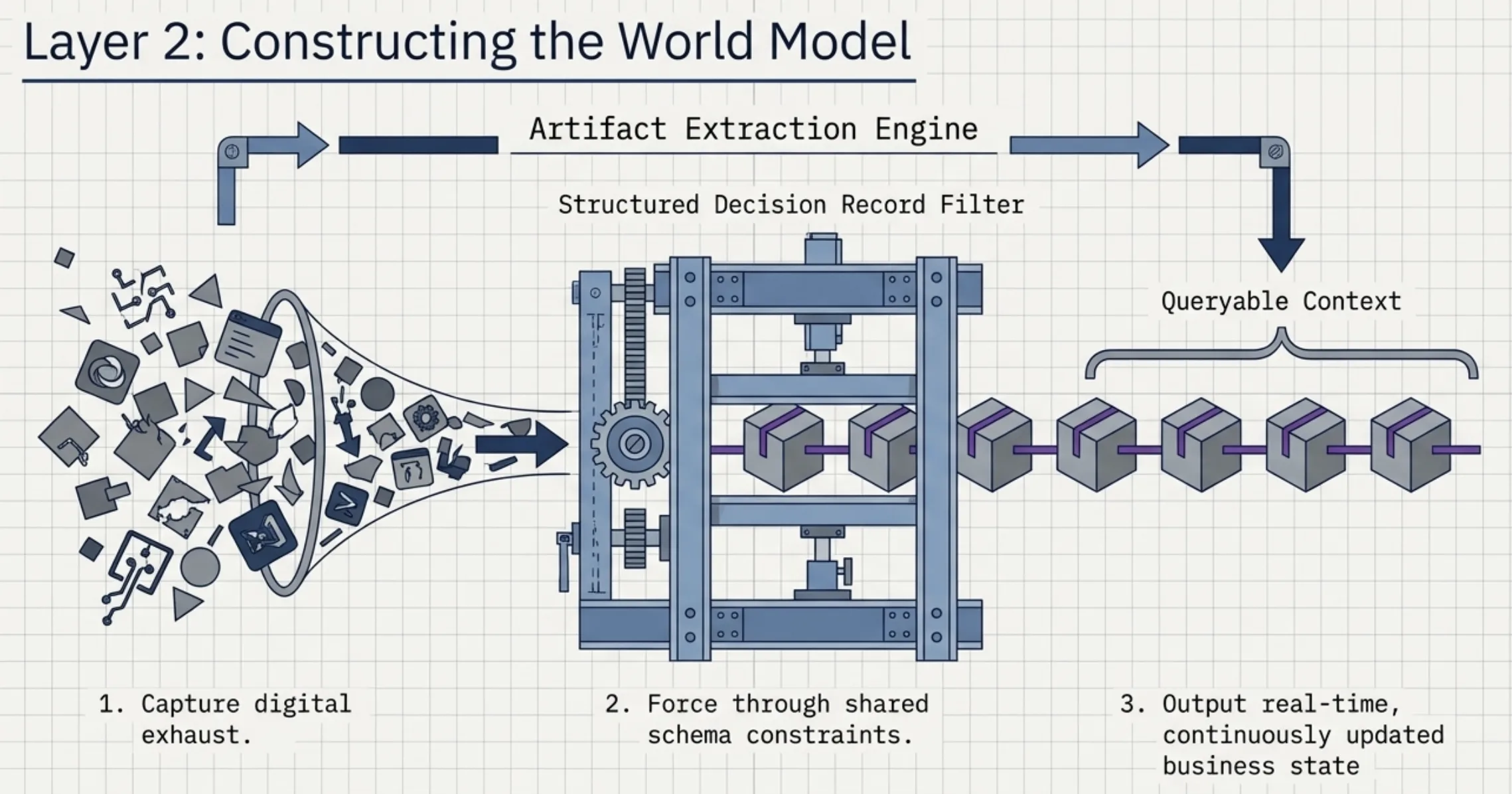

The world model concept is straightforward: everything your company does creates digital artifacts. Decisions, code, designs, plans, progress. AI builds and maintains a real-time picture from those artifacts, replacing the manager's traditional function of knowing what's happening and relaying context up and down the chain.

In a remote-first company where work already lives in tools (Linear, GitHub, Figma, Slack), the raw material exists. But raw material is not a world model. Most companies' data is fragmented across dozens of systems with no shared schema. The context that makes artifacts meaningful (why a decision was made, what constraint forced a tradeoff, who disagreed and why) lives in someone's head, not in any tool. Structured decision records close this gap: documents that capture who had input, what alternatives were rejected, what constraints drove the choice. Companies that already run this discipline (RAPIDs, architecture decision records, strategic planning documents) have decision context that's queryable. Companies that don't have nothing for the model to learn from.
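What "queryable decision context" could look like, as a minimal sketch. The `DecisionRecord` fields, the `decisions_driven_by` helper, and the sample record are all my own illustrative assumptions, not a schema the essay or any tool prescribes.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class DecisionRecord:
    """One structured decision record. Field names are illustrative assumptions."""
    title: str
    decided_on: date
    decision: str
    contributors: list[str]           # who had input
    rejected_alternatives: list[str]  # what was considered and dropped
    constraints: list[str]            # what drove the choice


def decisions_driven_by(records, keyword):
    """Return titles of decisions with a constraint that mentions the keyword."""
    needle = keyword.lower()
    return [
        record.title
        for record in records
        if any(needle in constraint.lower() for constraint in record.constraints)
    ]


# Hypothetical sample data, invented for this example.
records = [
    DecisionRecord(
        title="Adopt managed Postgres",
        decided_on=date(2025, 3, 4),
        decision="Move the billing database to a managed service",
        contributors=["platform lead", "billing engineer"],
        rejected_alternatives=["self-hosted cluster"],
        constraints=["two-person on-call rotation", "compliance audit in Q3"],
    ),
    DecisionRecord(
        title="Delay mobile redesign",
        decided_on=date(2025, 4, 18),
        decision="Ship the redesign after the pricing change",
        contributors=["design lead", "product owner"],
        rejected_alternatives=["ship both at once"],
        constraints=["single release train per quarter"],
    ),
]

on_call_decisions = decisions_driven_by(records, "on-call")
```

The point is not the data structure. It's that "what constraint forced this tradeoff" becomes a lookup instead of a conversation with whoever happens to remember.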

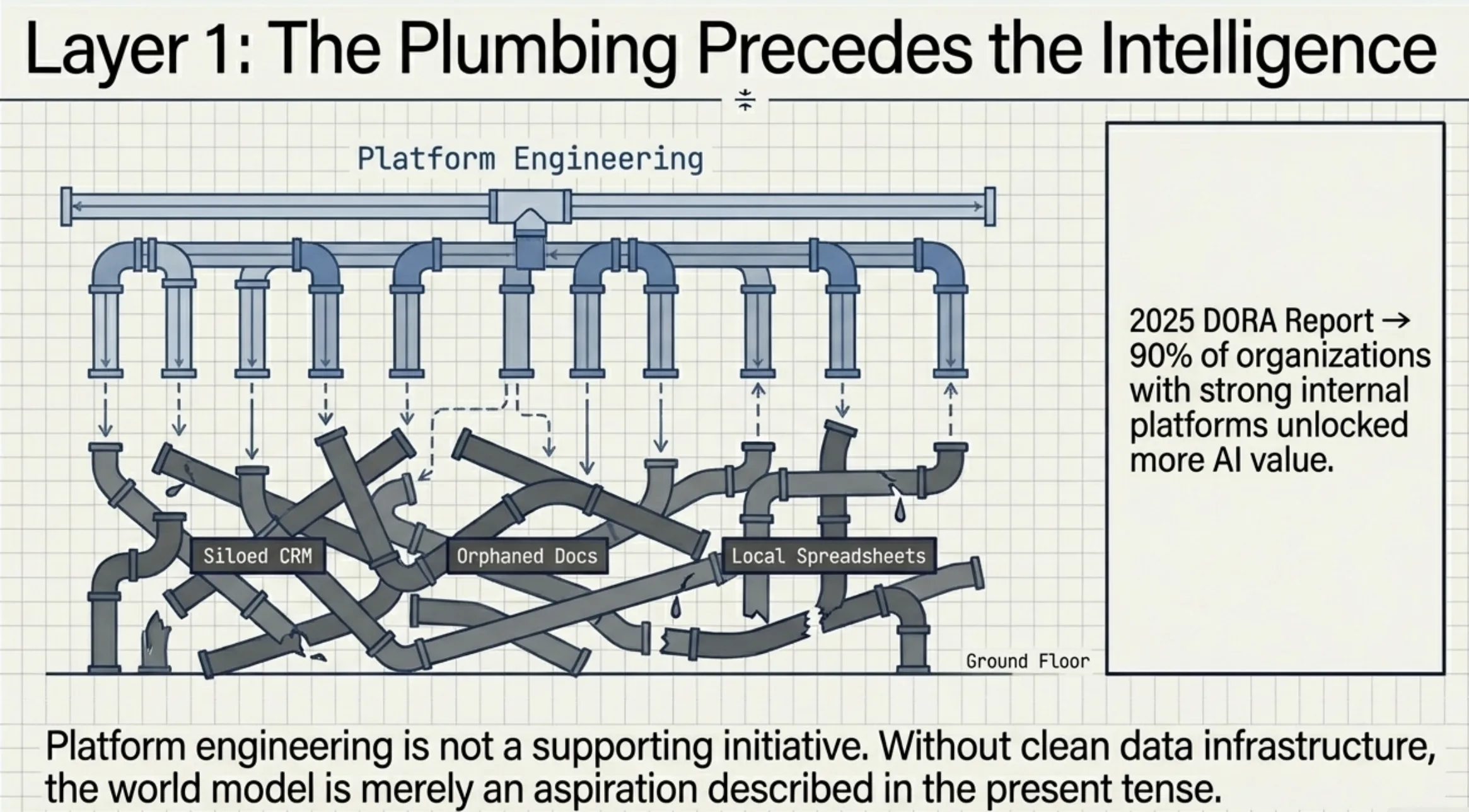

The 2025 DORA report found that 90% of organizations have adopted at least one internal platform, and that the quality of that platform correlates directly with how much value an organization unlocks from AI. Platform engineering isn't a supporting initiative for AI transformation. It's the foundation. Without clean data infrastructure, the world model is an aspiration described in present tense.

Start here: audit what's already machine-readable in your organization. Map the gaps between what your tools capture and what your managers actually know. Those gaps are your implementation backlog. Not the intelligence layer. The plumbing underneath it.

Failure signals are the strongest idea in the essay

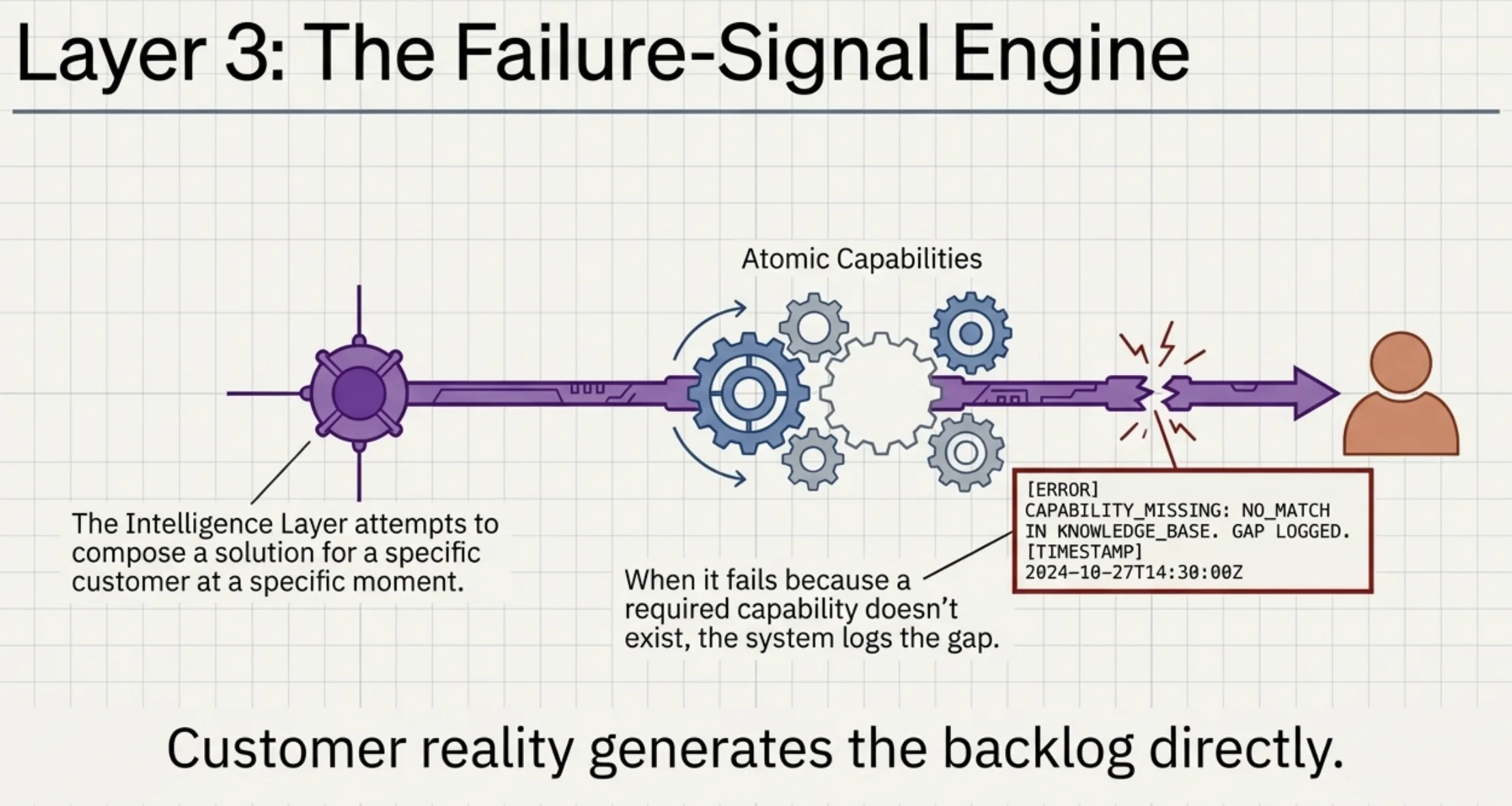

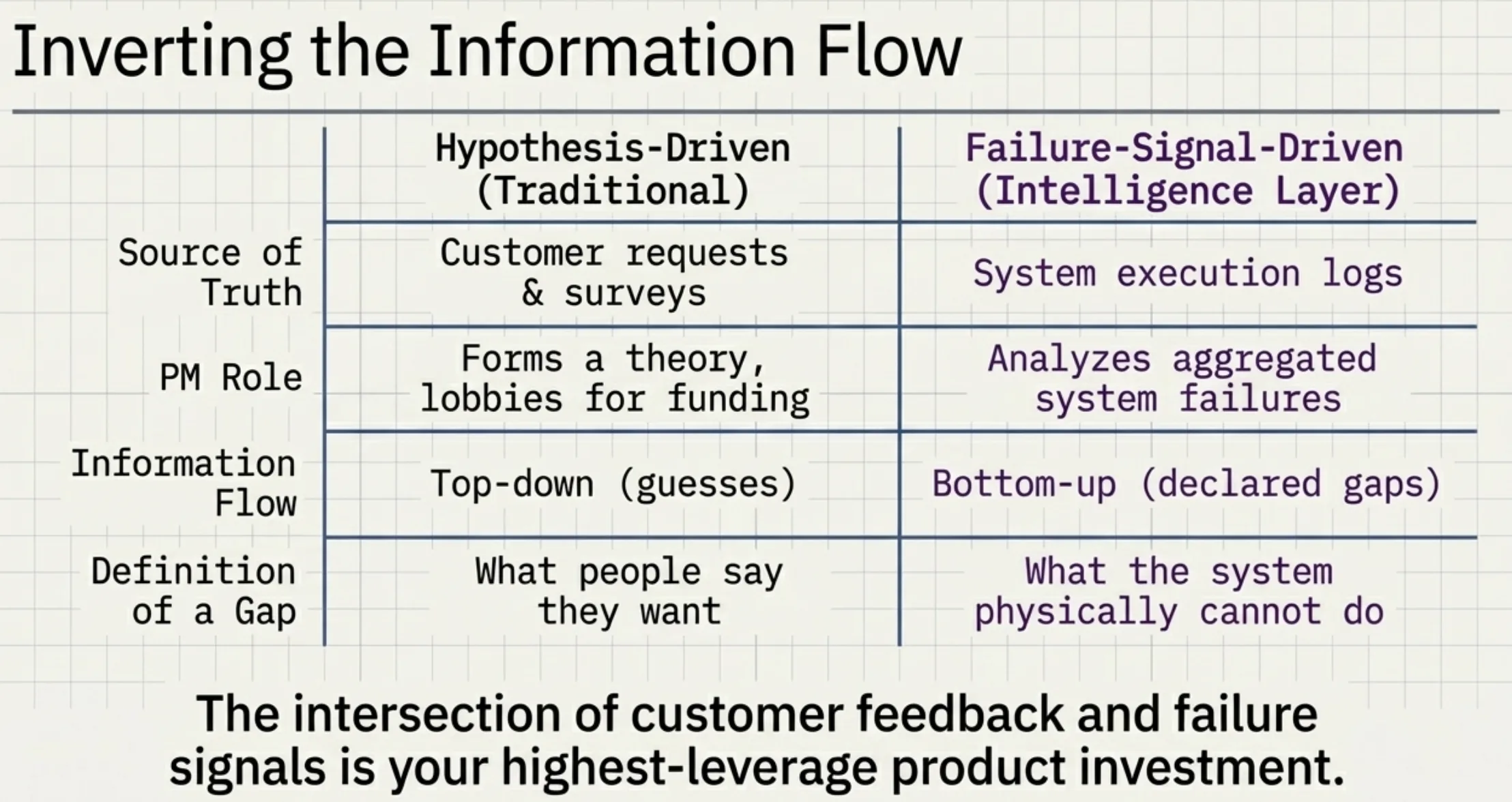

Traditional product roadmaps are hypotheses. A product manager studies data, forms a theory about what to build, convinces someone to fund it. The essay proposes something different: when the intelligence layer tries to compose a solution for a customer and fails because a required capability doesn't exist, that failure becomes the roadmap. Customer reality generates the backlog directly.

This inverts the information flow: product development shifts from hypothesis-driven to signal-driven. No product manager decided what to build. The gap declared itself. Customer feedback tells you what people want. Failure signals tell you what the system can't do. The intersection is your highest-leverage product investment.
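A toy sketch of the mechanism, under heavy assumptions: capabilities are plain strings, and "composing a solution" is reduced to checking which required capabilities exist. The names `compose`, `CompositionResult`, and every capability below are hypothetical. A real intelligence layer would be far more involved.

```python
from dataclasses import dataclass


@dataclass
class CompositionResult:
    plan: list[str]     # required capabilities that exist, in requested order
    missing: list[str]  # required capabilities that don't exist: the failure signal

    @property
    def succeeded(self):
        return not self.missing


def compose(required, available):
    """Split the required capabilities into what can run and what is missing."""
    plan = [name for name in required if name in available]
    missing = [name for name in required if name not in available]
    return CompositionResult(plan=plan, missing=missing)


# Hypothetical capability catalog and customer need.
AVAILABLE = {"issue_invoice", "send_reminder", "offer_payment_plan"}

result = compose(
    required=["issue_invoice", "convert_currency", "send_reminder"],
    available=AVAILABLE,
)
```

Here `result.missing` holds `convert_currency`. Nobody proposed that feature. The attempt to serve a customer surfaced it.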

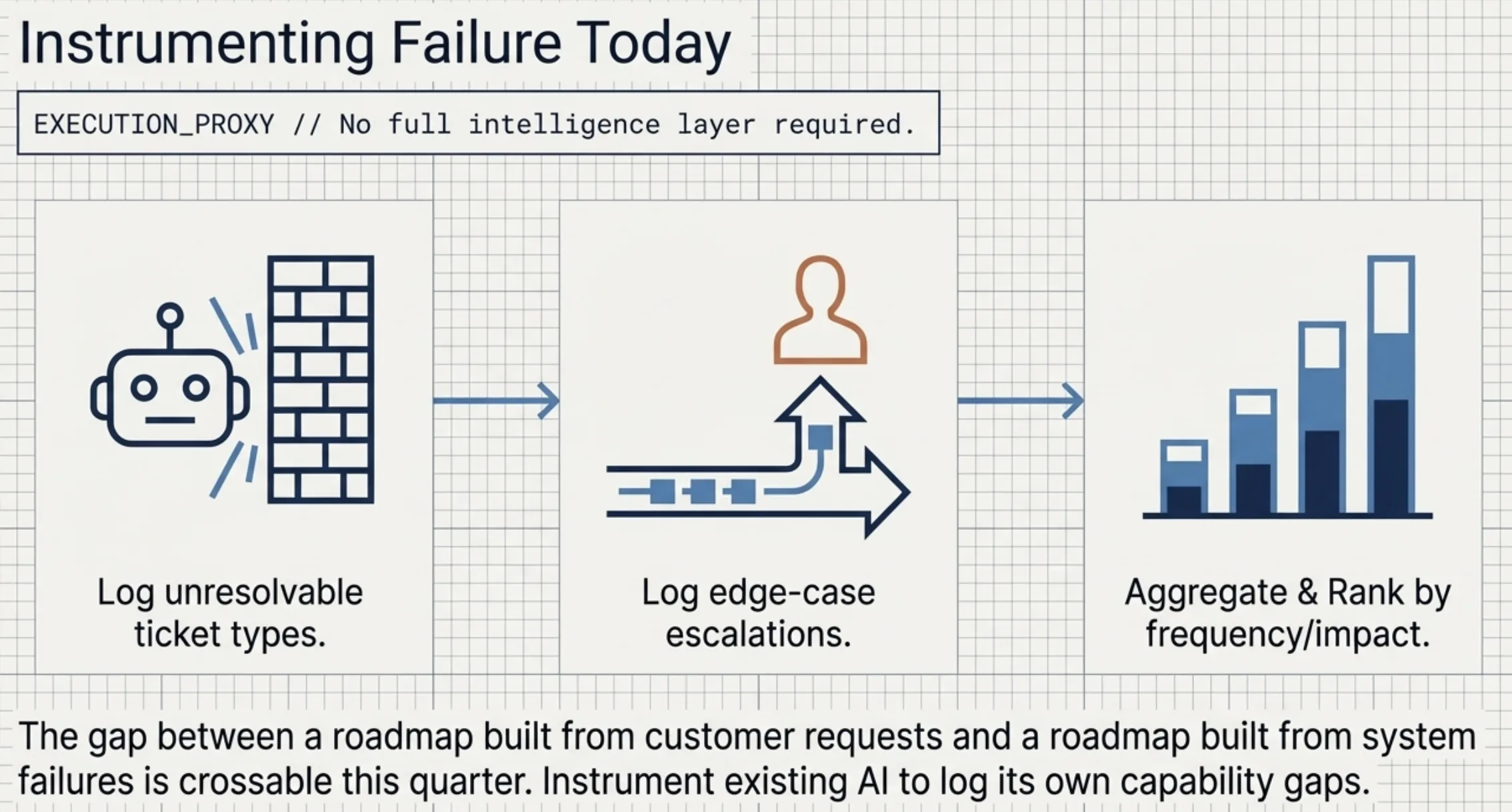

Most companies can start implementing this without a full intelligence layer. Instrument your existing AI systems to log capability gaps. When a support chatbot can't resolve a ticket type, log it. When an automated workflow hits an edge case and escalates to a human, log it. Aggregate the gaps. Rank by frequency and impact. That's your failure-signal roadmap. The gap between "roadmap built from customer requests" and "roadmap built from system failures" is crossable this quarter.
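The log, aggregate, rank loop fits in a few lines. This is a sketch, assuming each logged gap carries a name and an impact estimate (say, revenue at risk or minutes of human handling), and assuming "frequency and impact" is scored as summed impact across occurrences. That scoring rule is my choice, not the essay's. The event data is invented.

```python
from collections import defaultdict


def rank_gaps(events):
    """Aggregate (gap_name, impact) events and rank gaps by total impact.

    Returns a list of (gap_name, occurrences, total_impact) tuples,
    highest total impact first. Summing impact per occurrence means
    frequent gaps and costly gaps both rise to the top.
    """
    counts = defaultdict(int)
    impact_totals = defaultdict(float)
    for gap_name, impact in events:
        counts[gap_name] += 1
        impact_totals[gap_name] += impact
    ranked = [(name, counts[name], impact_totals[name]) for name in counts]
    ranked.sort(key=lambda row: row[2], reverse=True)
    return ranked


# Hypothetical log: each entry is one escalation or unresolved request.
events = [
    ("refund_partial_order", 40.0),
    ("change_billing_currency", 300.0),
    ("refund_partial_order", 40.0),
    ("refund_partial_order", 40.0),
    ("export_tax_report", 90.0),
]

roadmap = rank_gaps(events)
```

With this sample, one expensive currency failure outranks three cheap refund failures. Whether that's the right weighting is a judgment call, which is exactly the kind of call that belongs to a human.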

Humans at the edge means something specific

The essay's most provocative inversion: intelligence lives in the system, people operate at the edge. Edge functions: perceiving signals models can't quantify (intuition, cultural context, trust dynamics), making calls models shouldn't (ethical decisions, novel situations), reaching into places the system can't access.

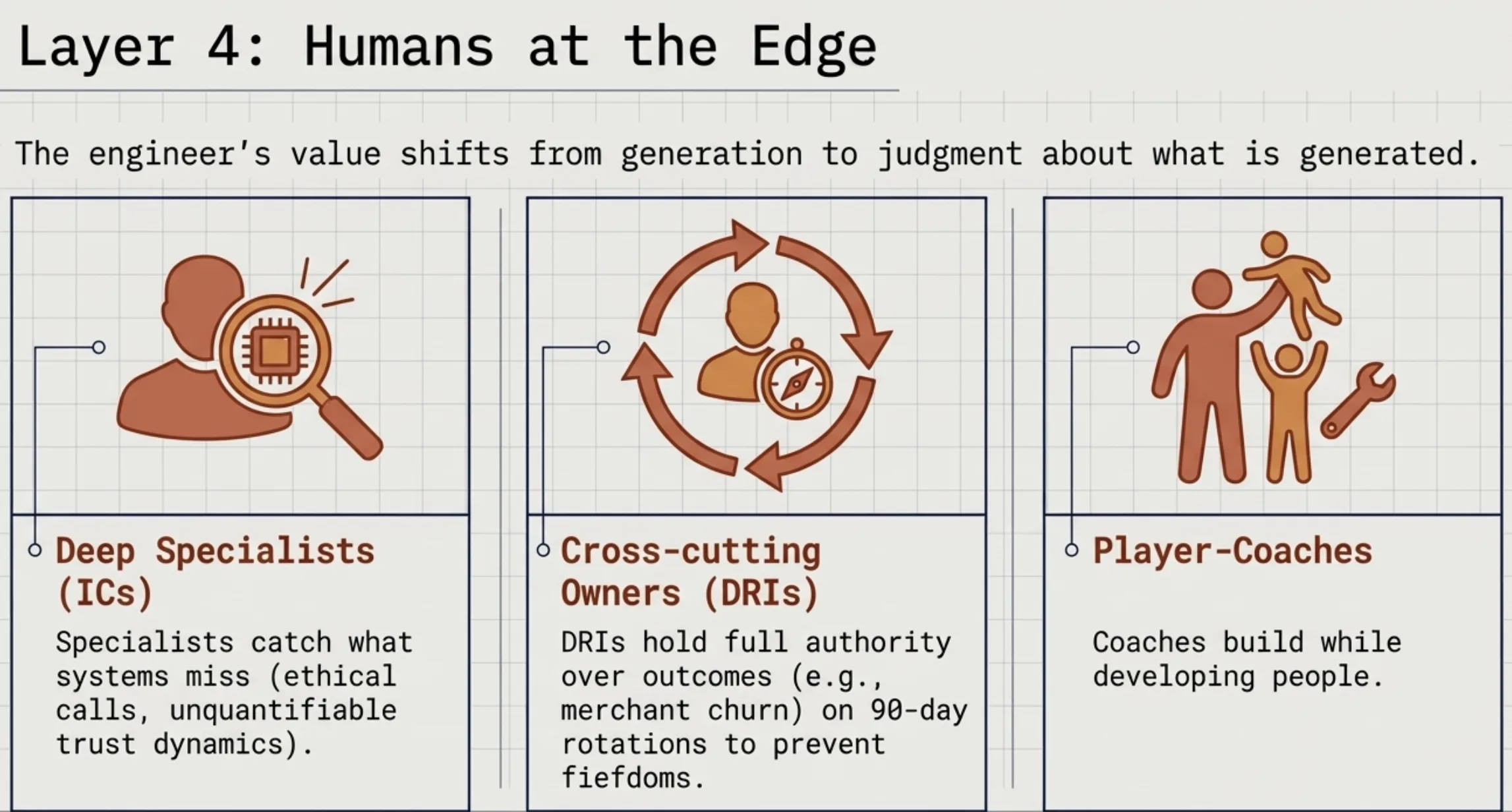

This maps to what I see working in practice. I described in The six walls of Claude Code how the best AI-augmented engineers aren't writing the most code. They're directing, verifying, catching what the system misses. That's edge work. The engineer's value shifts from generation to judgment about what's generated.

The essay proposes three roles for this structure: deep specialists (ICs), cross-cutting problem owners on 90-day rotations (DRIs), player-coaches who build while developing people. The DRI model is worth stealing. Giving someone full authority over a specific outcome (merchant churn in a segment, onboarding completion rate) for a fixed rotation creates ownership without permanent hierarchy. The 90-day window prevents fiefdoms. The rotation builds cross-functional judgment faster than any training program.

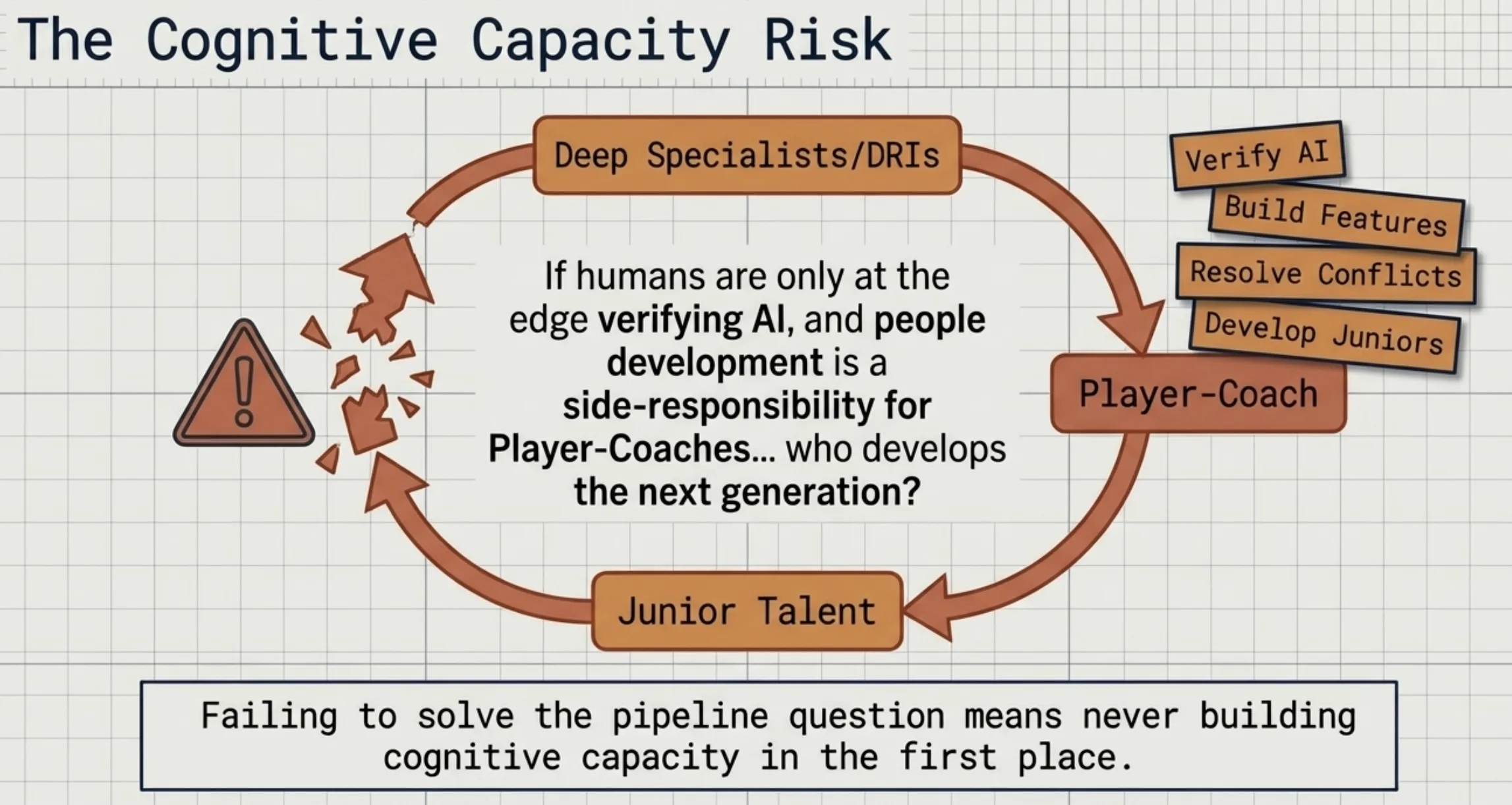

The player-coach model is where implementation gets hard. The essay never explains how someone stays "deep in the work" while developing junior engineers, resolving conflicts, building culture. I wrote in Your civilization about the risk of never building cognitive capacity in the first place. The pipeline question applies: who develops the next generation of ICs and DRIs if people development is a side responsibility rather than someone's primary job?

Where the framework meets reality

The essay's examples depend on a data advantage most companies don't have. Visibility into both sides of financial transactions creates a compounding feedback loop: richer signal, better model, more transactions, richer signal. A SaaS company, a logistics firm, a healthcare provider: each needs to identify its own equivalent. The essay claims universal relevance but describes a model built on a rare moat. The first step for any company is answering honestly whether it has one.

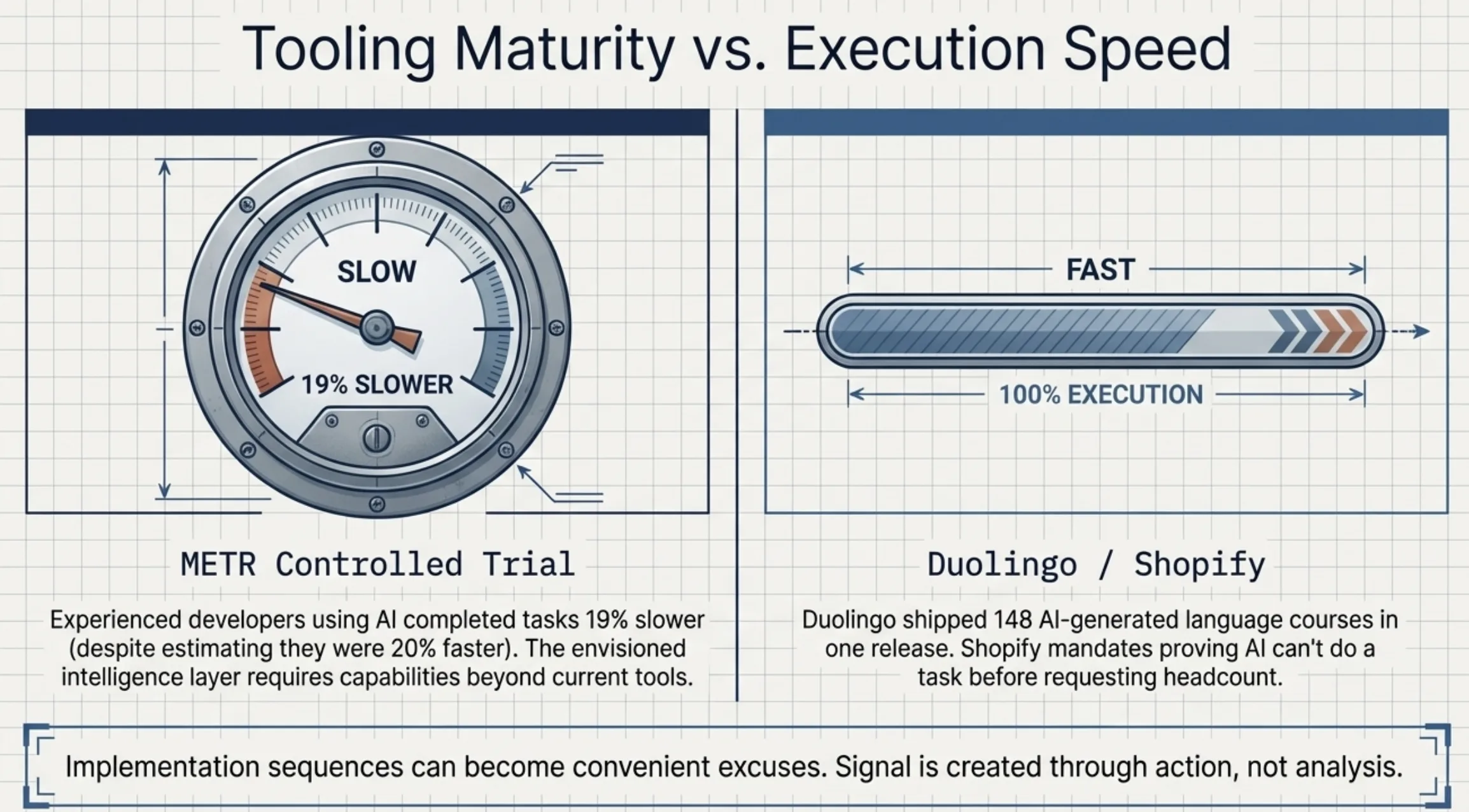

METR's 2025 randomized controlled trial suggests the underlying tools are still early. Experienced open-source developers using AI on their own repos completed tasks 19% slower, while believing afterward that AI had made them 20% faster. The intelligence layer the essay envisions requires capabilities beyond what current tools deliver. The vision may be directionally correct and still years from full realization.

I'm probably too focused on prerequisites. Duolingo shipped 148 AI-generated language courses in a single release without waiting for perfect infrastructure. Shopify's mandate to prove AI can't do a task before requesting headcount creates signal through action, not analysis. My implementation sequence might be a convenient excuse for organizations that need to just start building.

The question that determines everything

Come back to the essay's core question: what does your company understand that is genuinely hard to understand?

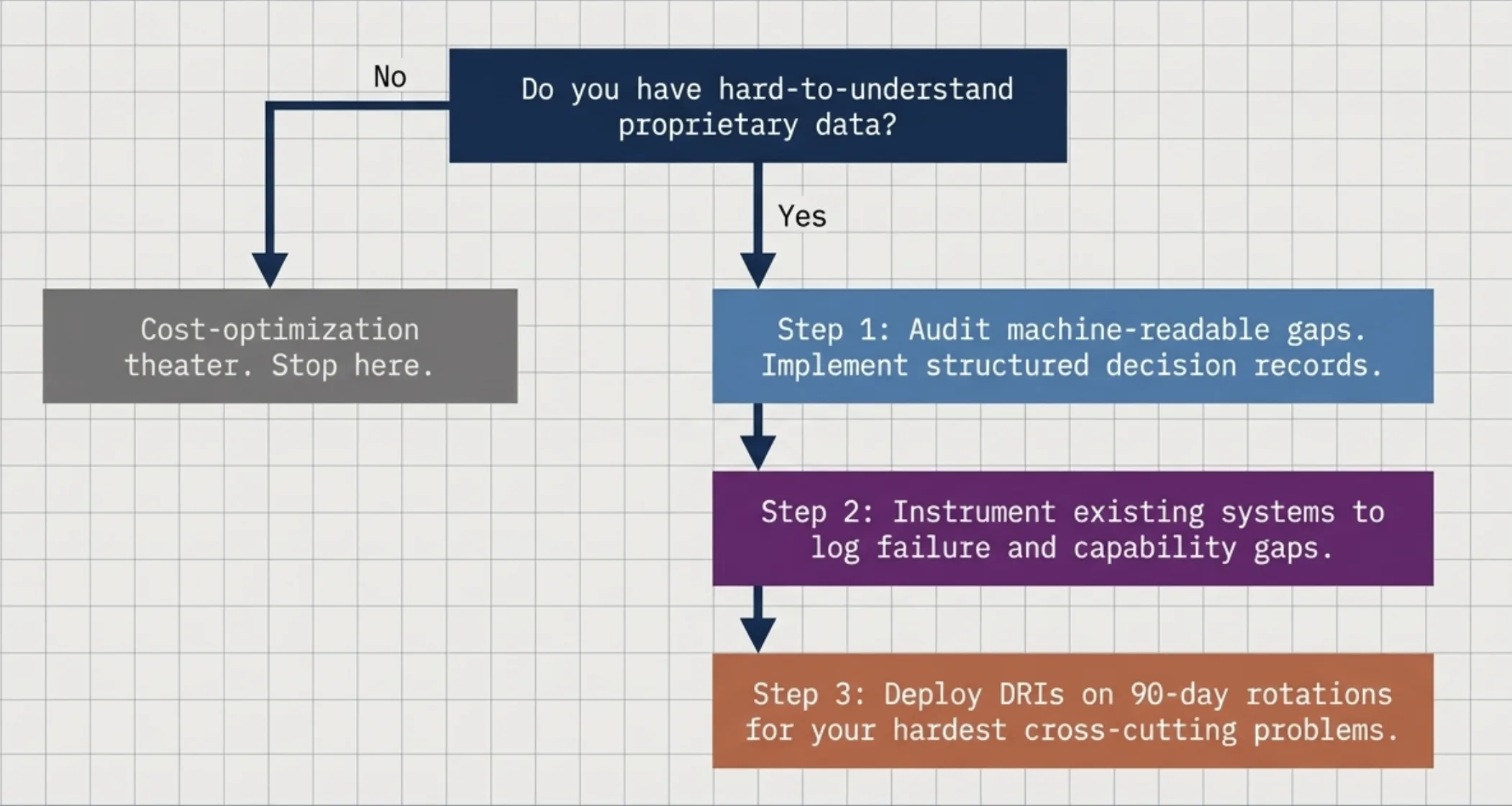

If you can answer it, the path is clear. Build the data infrastructure that makes your understanding machine-readable. Instrument your systems for failure signals. Start a 90-day DRI rotation on your hardest cross-cutting problem. Each step compounds.

If you can't answer it, the framework is infrastructure for an intelligence that has nothing to be intelligent about. That's the line between building something new and narrating a cost cut. The world model only works if there's something worth modeling.